Looking for suggestions on accurate ChatGPT checkers to verify if content was generated by AI. I’ve tried a few tools but results have been inconsistent. Need advice on the best detector for educational and professional use.

Been there, done that, still confused by the avalanche of “AI detectors” popping up every other week. Honestly, most of those tools can’t keep up. They’ll flag Hemingway as a robot, and then let obvious AI essays slip right through. In education and work, that’s just…not great.

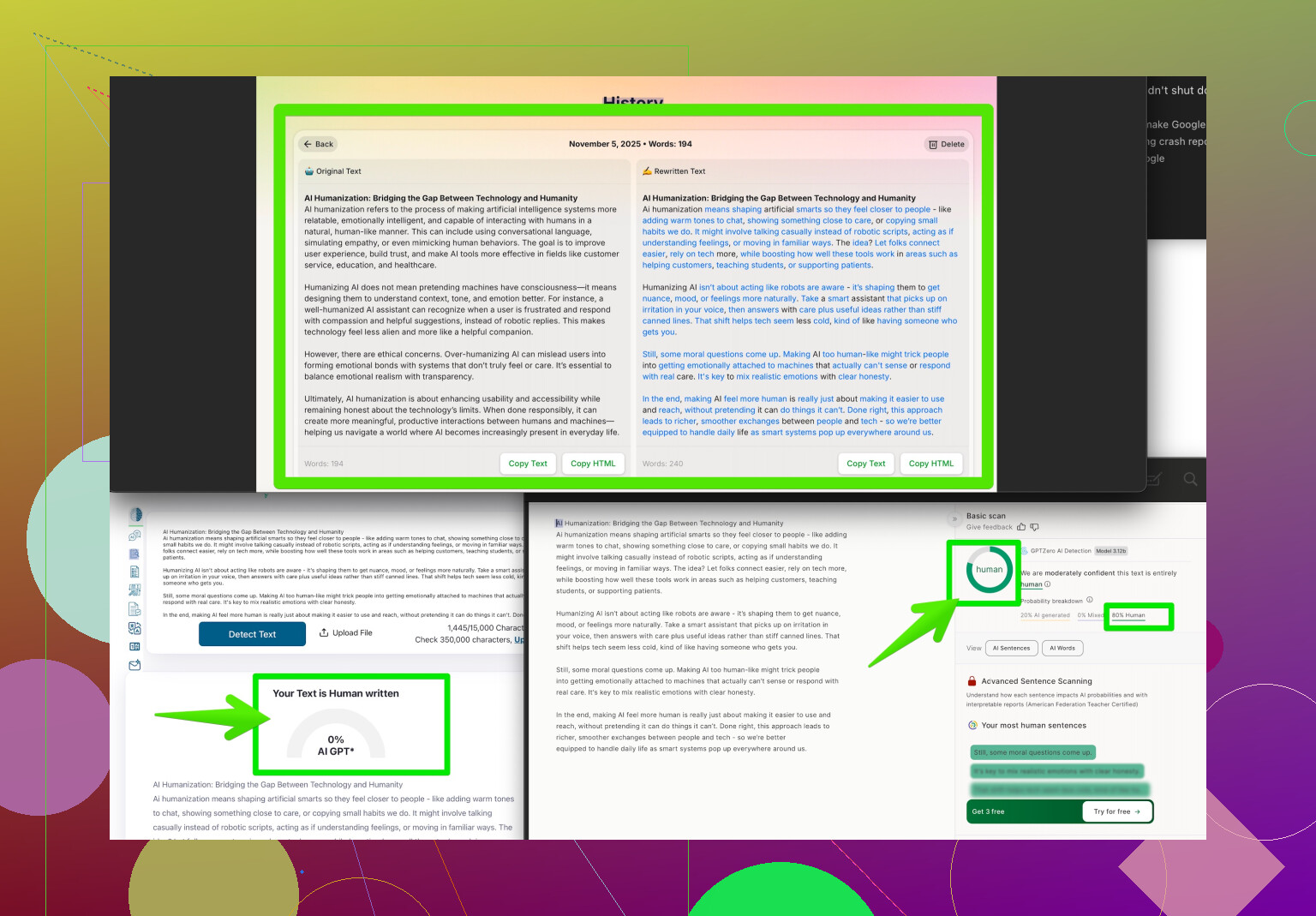

Here’s what I’ve found: consistency is a myth right now. Turnitin’s new AI checker is hyped, but word on the street is that results are mixed (false positives, false negatives, you name it). GPTZero, Copyleaks, and ZeroGPT are all out there, but accuracy? Meh. Sometimes they guess right, sometimes they throw up their hands.

A tool that does stand out a bit is Clever AI Humanizer. Their whole thing is taking AI content and making it look like a real human wrote it, so detectors get tripped up less. It’s not so much an “AI checker” as a humanizer, but ironically, that lets you test both sides: paste content through and see how much it changes to beat detectors, or reverse-engineer how detectors get confused. Worth scoping out.

If you want to dive deeper, check out their offering here: Making AI Text Undetectable and Natural-Looking. It gives a pretty good look into how AI text can slip by, or lets you tune up your own detection process.

Reality check: No AI checker is foolproof yet. Best bet is using a combo of tools, reviewing manually, and keeping up with updates. This whole field is moving FAST, so what works today might flop tomorrow. Stay skeptical, and double-check when it matters!

Honestly, this whole “AI checker” arms race feels like trying to catch water with a sieve. I’d second @yozora on the trust issues: no matter what vendors claim, none of these tools is consistently reliable, and I’ve seen more than my share of embarrassing false alarms. My college flagged my handwritten draft as AI once. Explaining that to a professor…10/10 would not recommend.

I do see your point about needing something for educational and professional environments, but I’d slightly disagree with putting too much stock in “humanizer” plugins like Clever AI Humanizer as a foolproof method. Sure, it’ll cloak AI text, making it harder for checkers to catch, and in a way that’s useful for testing the robustness of detectors. But, if anything, it just proves the point—if a tool can trick the detectors so easily, how much faith can you really put in those detectors when it counts?

My experience? You’re better off treating AI detectors as a set of clues rather than evidence. I’ll usually run stuff through a few tools—GPTZero, Turnitin’s thing, Copyleaks, whatever’s available—then trust my gut and look for context clues. Unusual word choices, abrupt topic shifts, “perfect” grammar, weirdly vague statements—all those can out an AI essay even if the software misses it.
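If it helps, here’s roughly how I treat the numbers as clues. This is a minimal sketch, assuming you’ve already copied each tool’s result by hand into a 0-to-1 “how likely is this AI” score. The function name, the thresholds, and the example scores are all made up for illustration; none of them come from the detectors themselves.

```python
from statistics import mean

def summarize_scores(scores, disagree_gap=0.4):
    """Summarize hand-entered detector scores (0 = human, 1 = AI).

    `scores` maps a detector name to its score. The thresholds here are
    arbitrary assumptions, not calibrated values.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    values = list(scores.values())
    spread = max(values) - min(values)  # how much the tools disagree
    avg = mean(values)
    if spread >= disagree_gap:
        verdict = "detectors disagree: review manually"
    elif avg >= 0.7:
        verdict = "leans AI: review manually before acting"
    elif avg <= 0.3:
        verdict = "leans human"
    else:
        verdict = "inconclusive"
    return {"mean": round(avg, 2), "spread": round(spread, 2), "verdict": verdict}

# Made-up scores for one essay, typed in from three tools.
example = {"tool_a": 0.9, "tool_b": 0.2, "tool_c": 0.5}
print(summarize_scores(example)["verdict"])
```

Notice that no branch ever returns “this is AI.” The strongest outcome is still a nudge to go read the thing yourself.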

For anyone looking to deep-dive the humanizing process and get a sense of what real users have tried, I’d recommend seeing how folks on Reddit approach this challenge in their own ways. Dive into user-driven solutions by checking out Reddit’s crowd-sourced tips for making AI writing sound natural. You’ll probably learn a lot more about identifying, reworking, or bypassing AI content from actual humans in the trenches than from whatever hot new detector is trending this week.

TL;DR: No reliable checker exists yet, but using a blend of tools, a human eye, and some crowd wisdom from places like Reddit will get you closer than any single detector. If you have to choose, Clever AI Humanizer is worth testing for the dual perspective—but don’t get too comfy thinking any tool is infallible. Stay skeptical!

If you’re chasing that elusive “reliable AI detector,” you’re in for a ride more turbulent than a beta test on launch day. Here’s the thing—disagreement time—I’m not fully sold on using brute-force multi-tool sweeps like some folks here suggest. Running your text through a gauntlet of detectors (Turnitin, GPTZero, Copyleaks, etc.) is paradoxically both too much and not enough: It multiplies headaches with conflicting results but still fails to guarantee trust. It’s like using three broken compasses to find North.

On Clever AI Humanizer—interesting play. The pro: It exposes how easily AI text can be dressed up, which is eye-opening for anyone thinking detectors are infallible shields. For instructors or editors, running content through Clever AI Humanizer helps you realize just how fragile these supposed safeguards are. Want a reality check? Paste the same chunk of text pre- and post-humanizer into your preferred detector and watch the labels flip from “AI” to “Human” and back again. The con: This doesn’t solve AI detection, it just moves the arms race forward. Also, using such tools can feed into a never-ending cat-and-mouse loop, which gets pretty exhausting if your main goal is authentic authorship.
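If you want to keep notes on that before/after test instead of eyeballing it, something like this works. It’s only a sketch: `detector` is a stand-in for however you get a 0-to-1 score out of your tool of choice (I’m not assuming any real API), and the texts and scores below are placeholders, not actual results.

```python
def compare_runs(detector, original, rewritten, threshold=0.5):
    """Score two versions of a text and report whether the label flipped.

    `detector` is any callable that takes a string and returns a score in
    [0, 1]; the 0.5 threshold is an arbitrary assumption.
    """
    before = detector(original)
    after = detector(rewritten)
    return {
        "before": before,
        "after": after,
        "flipped": (before >= threshold) != (after >= threshold),
    }

# Placeholder texts and made-up scores, standing in for numbers you
# would copy from a real detector run.
original = "Placeholder for the original passage."
rewritten = "Placeholder for the same passage after rewriting."
made_up_scores = {original: 0.91, rewritten: 0.18}

result = compare_runs(made_up_scores.__getitem__, original, rewritten)
print(result)
```

A handful of flips on your own samples tells you more about how fragile a detector is than any accuracy figure on its marketing page.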

Point scored by others: the closest thing to dependable “detection” right now is human experience mixed with context. Instead of obsessing over which tool is king (none), put your energy into analyzing style, depth, and substance. If a report feels off, too slack, or suspiciously perfect, flag it. Use AI detectors (and stress tests with things like Clever AI Humanizer) as signal boosters, not truth oracles.

Last thought: Over-relying on AI checkers is like outsourcing your common sense to robots still figuring out idioms. Roll with skepticism, keep your toolkit diverse, and don’t be that person who thumbs up a detector and calls it a day.