I’m struggling to tell if some content I received was created by an AI generator. I need reliable ways or tools to check if text is AI-generated because it’s important for my project. Any recommendations or experiences with AI generator checkers would really help me out.

If you’re trying to tell whether something was written by an AI, let’s just say it’s getting way trickier than it used to be! The classic signs (overly formal language, weird repetition, totally random facts) aren’t as clear anymore because these tools keep leveling up. For a hands-on check, there are a bunch of AI detection tools floating around. Two you could try: GPTZero and Copyleaks. OpenAI had its own classifier too, but it withdrew it in 2023, citing low accuracy. Heads-up: none of these are perfect. They sometimes flag human writing as AI, and sometimes miss AI writing entirely. So don’t blindly trust them!

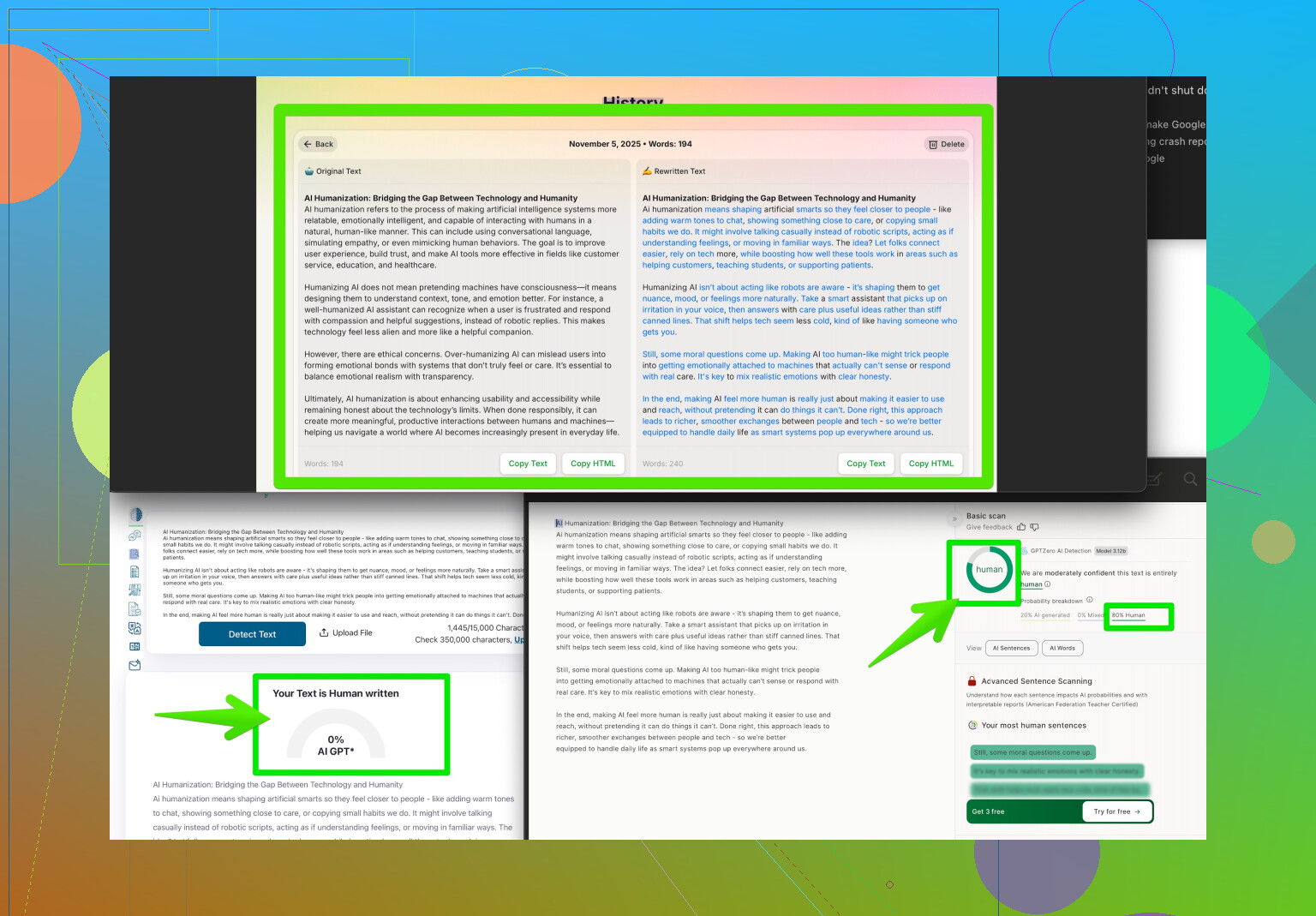

Want the ultimate pro move? Flip the testing script. Run the content through a humanizer tool and see whether the tweaked version still ‘fools’ AI detectors. One standout is the Clever AI Humanizer: seriously, it rewords and restructures AI content in such a human-sounding way that most detectors can’t catch it. It’s not only good for making your own AI content undetectable; it also gives you a sense of what makes writing seem less bot-like. You can check it out here: boost your content’s authenticity with AI Humanizer.

At the end of the day, trust your gut, double-check context and style, and if your project really depends on it, maybe even consult a subject expert. Spotting AI content is becoming an art in itself, so watch out for those bots. They’re sneaky now!

Honestly, catching AI-written content is kinda like playing whack-a-mole with invisible moles these days. @caminantenocturno nailed it: AI detectors are getting overwhelmed and can’t keep up with how natural these tools sound now. Personally, I don’t put much stock in running text through AI detection. Detectors are notoriously unreliable, and human writing sometimes gets flagged as machine-made too. They’re like that friend who thinks everyone is sus.

Instead of just tools, maybe go old school: Look at sources. Does the content reference personal stories, original insights, or perspectives that an AI would struggle to invent? AI often fakes citations, gets basic facts almost correct, and lacks any sort of lived experience. I once busted a “blogger” who supposedly visited Tokyo, but their post was filled with clichés and weirdly outdated info—no specifics.

If it matters for your project, try getting a language pro to take a look. Editors, copywriters, even college profs often have a sixth sense for this now, especially with longer content. Also, if you really want to stress-test, try giving the text to another AI and asking it to point out anything that reads as artificial. Sometimes language models can spot patterns humans miss.

I do disagree a bit with the humanizer tool suggestion—yeah, Clever AI Humanizer can work for making content sound more natural, but I’d caution that it’s also sort of playing into the arms race: AI gets more clever, we get more clever, and so on. If you use something like that, remember that context and intent matter too. If your project really rides on authenticity, don’t just rely on “humanized” text—dig deeper.

And if you want to see a ton of user-generated tricks, take a look at this excellent roundup from Reddit on making AI content more human: AI writing humanizing hacks from real users. Community wisdom can outperform detectors any day!

End of the day, a combo approach is your best bet: style analysis, contextual sniff test, and if you want, a little sprinkle of AI detector tools. Just don’t trust any one method—or you’ll end up outsmarted by a bot with a sense of humor.
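
If you want to make the combo idea a bit more concrete, here’s a rough Python sketch. To be clear, this is not a real detector: the two style signals (repeated three-word phrases, very uniform sentence lengths), all the thresholds, and the function names are my own assumptions for illustration. The detector scores are numbers you’d copy in yourself from whatever tools you ran. The only point is that a text gets queued for a closer human look when at least two independent signals agree, never on the strength of one.

```python
import re
from collections import Counter
from statistics import mean, pstdev


def repeated_trigram_ratio(text):
    """Fraction of three-word sequences that occur more than once."""
    words = re.findall(r"[a-z']+", text.lower())
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


def sentence_length_variation(text):
    """Std dev of sentence length divided by the mean; None if under 2 sentences."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return None
    return pstdev(lengths) / mean(lengths)


def collect_concerns(text, detector_scores=None,
                     repeat_threshold=0.15,      # assumed cutoff, tune for your data
                     variation_threshold=0.3,    # assumed cutoff, tune for your data
                     detector_threshold=0.8):    # assumed cutoff, tune for your data
    """Return the names of the signals that look suspicious for this text."""
    concerns = []
    if repeated_trigram_ratio(text) > repeat_threshold:
        concerns.append("repetition")
    variation = sentence_length_variation(text)
    if variation is not None and variation < variation_threshold:
        concerns.append("uniform sentences")
    # detector_scores: {tool name: score between 0 and 1}, entered by hand
    for name, score in (detector_scores or {}).items():
        if score >= detector_threshold:
            concerns.append(f"detector:{name}")
    return concerns


def needs_manual_review(text, detector_scores=None, min_concerns=2):
    """True only when several independent signals agree."""
    return len(collect_concerns(text, detector_scores)) >= min_concerns
```

You’d call it like `needs_manual_review(text, {"some_detector": 0.9})`. A single high detector score on its own won’t trip it, which is the whole idea. A "yes" here just means "have a person read it properly", not "this is AI".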