I recently went through a HIX bypass review and the outcome wasn’t what I expected. I’m confused about how the decision was made, what criteria were used, and whether there’s any way to appeal or provide more information. Can anyone explain how HIX bypass reviews usually work, what factors matter most, and what steps I should take next to resolve this?

HIX Bypass AI Humanizer Review

I tried HIX Bypass after seeing their homepage shouting about a “99.5% success rate” and throwing around logos from Harvard, Columbia, and Shopify. The whole thing looked solid at first glance, so I figured I would run a few real tests before judging it.

Here is what happened.

Test setup and results

I took two different AI-generated samples, ran them through HIX Bypass, then checked the outputs with a few detectors:

-

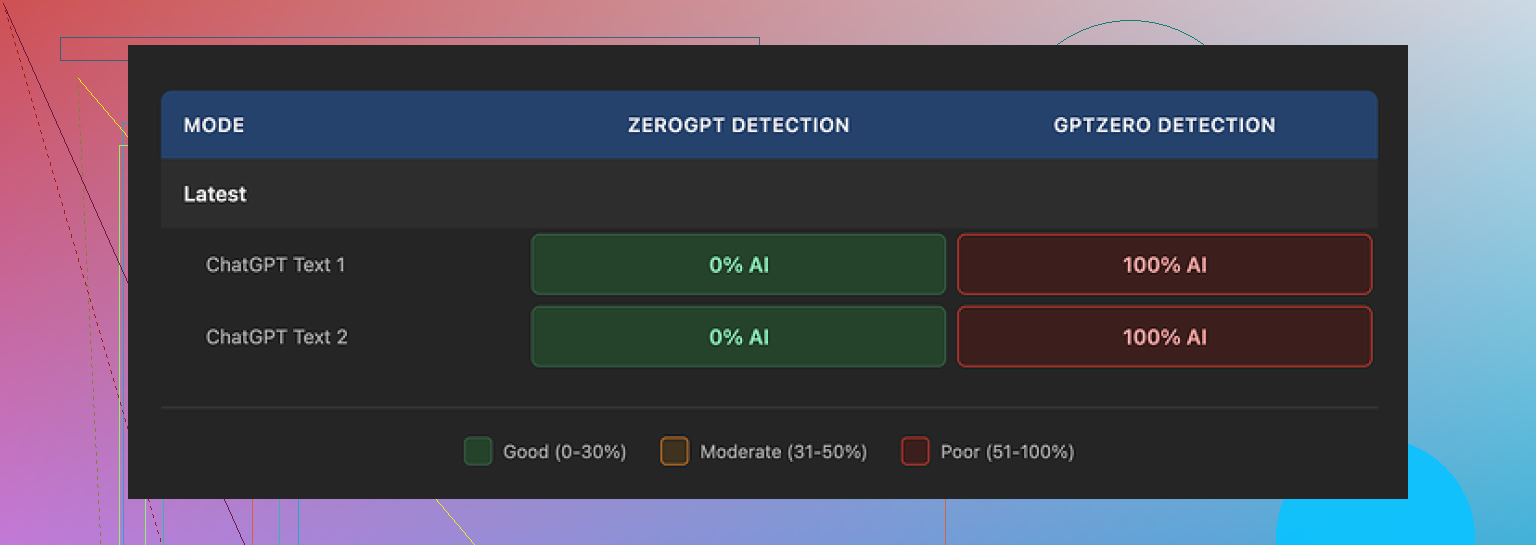

ZeroGPT

Both rewritten samples went through ZeroGPT without any problems. It reported them as human text. -

GPTZero

This is where things broke. GPTZero flagged both outputs as 100% AI written. Not borderline. Full AI score on each test. -

HIX’s built in detector dashboard

Their internal panel confidently labeled the results as “Human-written” across most of the integrated detectors. That looked nice on screen, but it did not match what GPTZero reported at all.

Screenshot from the detector panel:

So in practice, the “99.5% success” on the site did not line up with how the text behaved on GPTZero in my tests.

Writing quality

Ignoring detectors for a second, I checked how the rewritten text read on its own.

If I had to score the writing, I would give it about 4 out of 10. Here is why.

• It kept several em dashes from the original AI text, which is one of those little tells detectors and editors often watch for.

• One sentence turned into a broken fragment, like the model lost track of what it was doing halfway through.

• In one sample, the tool wrapped an entire sentence in square brackets for no clear reason, which felt like a formatting glitch, not a style choice.

The rewrites did not look like anything a careful human editor would send to a client. They looked closer to slightly reshuffled AI output.

Free tier and refund trap

The free plan gave me 125 words total for the whole account. That is not 125 words per run, that is 125 words for everything. You burn through that in a few tests.

The paid side has a 3 day refund window with a catch. If you process more than 1,500 words, you are no longer eligible for a refund. So if you run a couple of long articles to see if the tool works for your use case and you cross that limit, the test itself locks you out of getting your money back.
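To make the catch concrete, here is a minimal sketch of the refund rule as I read it. The two numbers come from the policy described above. The function names are mine, and treating day 3 as still inside the window is an assumption, so check the current terms before relying on it.

```python
# Figures as described in this review; the real policy may differ or change.
REFUND_WINDOW_DAYS = 3
REFUND_WORD_CAP = 1500


def refund_eligible(days_since_purchase: int, words_processed: int) -> bool:
    """Return True only while both refund conditions still hold."""
    # Assumption: day 3 itself still counts as inside the window.
    within_window = days_since_purchase <= REFUND_WINDOW_DAYS
    # "More than 1,500 words" loses the refund, so exactly 1,500 is still fine.
    under_cap = words_processed <= REFUND_WORD_CAP
    return within_window and under_cap


def words_left_before_lockout(words_processed: int) -> int:
    """Words you can still process before crossing the cap (never negative)."""
    return max(0, REFUND_WORD_CAP - words_processed)
```

Two 800-word articles on day one already put you at 1,600 words, so `refund_eligible(1, 1600)` is false even though you are well inside the 3 days.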

For anyone trying to evaluate the tool seriously, that policy feels very tight.

Pricing and terms

On paper, the pricing looks nice. The “Unlimited” annual plan comes out to about 12 dollars per year. That sounds generous, until you read the terms.

A few things stood out when I went through their terms of service:

• They give themselves the right to change usage limits even after you pay. So “Unlimited” is more of a marketing title than a hard guarantee.

• They grant themselves broad rights over any content you submit through the tool. That is something you need to keep in mind if you feed in client work, drafts under NDA, or anything sensitive.

• If you are on the free tier, your inputs may be used for training their AI models. That is in line with a lot of tools right now, but it matters for anyone dealing with proprietary material.

So the price looks low, but the strings attached make it less attractive if you care about control over your text.

Comparison with another tool

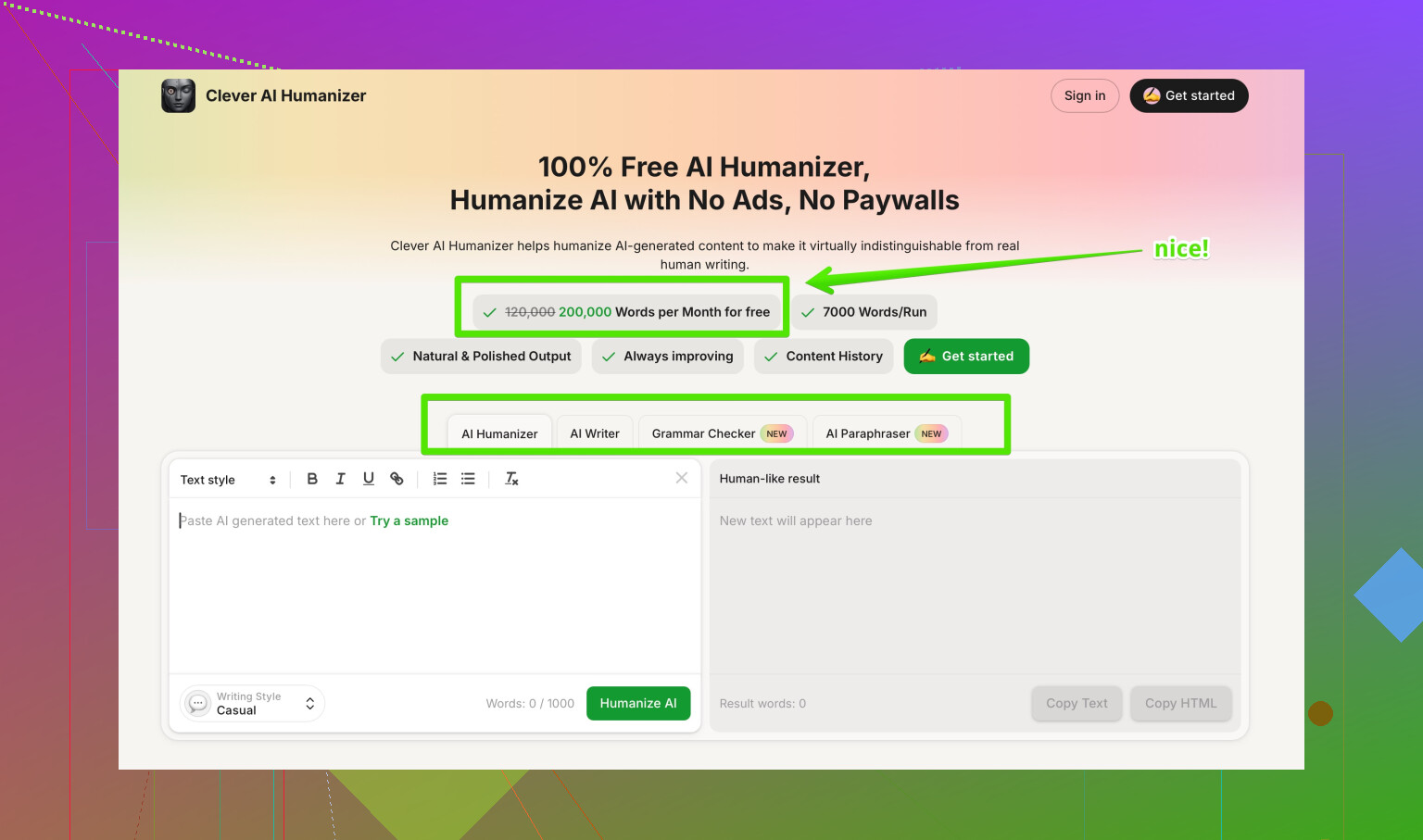

After HIX Bypass, I tried a different tool that people kept mentioning, Clever AI Humanizer. The review and proof thread I used as a reference is here:

Using the same rough approach, I pushed AI text through Clever AI Humanizer and checked detectors again. The rewrites felt closer to normal human writing and the scores were better, and I did not pay anything for that.

So for my use case, where I wanted something that produces cleaner rewrites and holds up better against detectors like GPTZero, Clever AI Humanizer ended up ahead, without needing to commit money or deal with tight refund conditions.

HIX Bypass reviews confuse a lot of people, so you are not the only one. The process feels opaque and the marketing does not help.

Here is what is likely going on, based on tools like this and what @mikeappsreviewer already tested.

- How the “review” decision is made

HIX is not running one universal standard. It leans on a few things.

• Internal detector panel

They aggregate several detectors in their dashboard. Many are softer than GPTZero. If 3 tools say “human” and 1 says “AI”, they still present a positive result in their UI.

• Detector choice matters

GPTZero is stricter than ZeroGPT in a lot of cases. In Mike’s test, ZeroGPT said “human”, GPTZero said “100 percent AI”. HIX shows the friendly ones in the dashboard. The strict ones are the ones your teacher or client might use.

• Text patterns

Detectors key on features like:

- Overuse of similar sentence lengths

- Predictable word choices

- Repeated punctuation patterns

- Odd fragments or bracketed text

If HIX keeps things like em dashes, uniform rhythm, or bracket artifacts, detectors score it high for AI.

So when your “bypass review” fails, it is often because the output still looks like AI to one of the tougher detectors, even if HIX’s own panel says it looks fine.
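A rough sketch of why the two views disagree. HIX does not publish how its panel combines detectors, so the majority rule below is only an assumption based on the "3 say human, 1 says AI" behavior described above, and `detector_b` and `detector_c` are placeholder names.

```python
from typing import Dict

# Hypothetical verdicts: True means the detector flagged the text as AI.
Verdicts = Dict[str, bool]


def majority_says_human(verdicts: Verdicts) -> bool:
    """Dashboard-style summary: 'human' if fewer than half the detectors flagged it."""
    flagged = sum(verdicts.values())
    return flagged < len(verdicts) / 2


def passes_detector(verdicts: Verdicts, detector: str) -> bool:
    """The check that matters in practice: did this one detector flag the text?"""
    return not verdicts[detector]


sample = {"ZeroGPT": False, "detector_b": False, "detector_c": False, "GPTZero": True}
print(majority_says_human(sample))         # True
print(passes_detector(sample, "GPTZero"))  # False
```

Same text, same verdicts, opposite answers. The summary looks fine, and the one detector your reviewer uses still says AI.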

- Likely criteria behind the scenes

They do not publish a full checklist, but from behavior, you can assume these factors:

• Perplexity and burstiness

Low variance in word choice or sentence structure raises AI suspicion.

• Structure and formatting

Broken sentences, weird brackets, strange paragraph breaks, and overly neat structure all flag risk.

• Length and density

Very dense, compact explanations in short space often look like AI. Human text tends to wander a bit more and has small imperfections.

Your review result probably combined these signals and hit some internal threshold.
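If you want a feel for the "low variance" signal, here is a crude proxy that measures only how much sentence length varies. It is not perplexity and it is not what any detector runs. Real tools score text with language models, and their thresholds are not public. The function names are mine.

```python
import re
import statistics
from typing import List


def sentence_lengths(text: str) -> List[int]:
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length (0.0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # Not enough sentences to measure variation.
    return statistics.pstdev(lengths) / statistics.mean(lengths)
```

Text where every sentence has about the same number of words scores near 0.0. A mix of short and long sentences scores higher. Treat it as a way to spot flat rhythm in your own draft, not as a prediction of what GPTZero will say.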

- Why the outcome felt off

A few reasons this feels unfair from the user side.

• The “99.5 percent success rate” line primes you to expect automatic passes. That stat is marketing, not an audited number.

• Their dashboard is tuned to look reassuring. If your teacher or platform uses GPTZero, and HIX instead prioritizes more lenient detectors that agree with its own output, your real-world result will conflict with what you see on the HIX site.

• Short trials. With 125 total free words, you do not get enough runs to learn how to adjust your text. You hit the limit before you get a feel for where it fails.

On this I slightly disagree with @mikeappsreviewer. I do not think the tool is useless, but it looks misaligned with high stakes use, like school submissions or client work that goes through strict detection.

- What you can do next

If you want to push back or understand the decision better, here are practical steps.

A. Recreate the review yourself

Run your text through:

• GPTZero

• ZeroGPT

• Another detector of your choice

Compare:

• If everyone flags AI, the problem is with the text.

• If only one flags AI, and that is the one your teacher or platform uses, you need to tune for that specific tool.
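The comparison can be written down as a small triage helper. This is only a sketch of the two rules above. The last two branches and the wording of the returned messages are my own additions, not something HIX or any detector defines.

```python
from typing import Dict


def triage(verdicts: Dict[str, bool], reviewer_detector: str) -> str:
    """Map detector results (True = flagged as AI) to a next step."""
    if not verdicts:
        raise ValueError("need at least one detector result")
    if all(verdicts.values()):
        return "every detector flags it: rework the text itself"
    if verdicts.get(reviewer_detector, False):
        return f"tune for {reviewer_detector}: it is the one your reviewer uses"
    # Assumption: flags from detectors your reviewer does not use matter less.
    if any(verdicts.values()):
        return "flagged only by a detector your reviewer does not use"
    return "no detector flagged it"
```

For the results in Mike's test, `triage({"ZeroGPT": False, "GPTZero": True}, "GPTZero")` lands in the second branch.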

B. Contact HIX support with specifics

Do not send a vague “why did this fail” message. Send:

• The input text you used

• The output from HIX

• Screenshots or copy of detector results from GPTZero or wherever it failed

• The context, like “used for school assignment, checked by GPTZero”

Ask clear questions:

• Which detectors are used in their “review” decision.

• Whether they have any guidelines to reduce AI detection for GPTZero specifically.

Do not expect much detail, but sometimes support gives at least some practical tips.

C. Clean up typical AI tells manually

Even if you keep using HIX, treat it as a draft tool, not a final pass. After running your text through it:

• Rewrite topic and conclusion sentences in your own words.

• Shorten a few long sentences and extend a few short ones, so rhythm varies.

• Change transition words, like “however”, “thus”, “therefore”, to your own style.

• Remove odd brackets and weird punctuation.

• Add 1 or 2 small, specific details from your experience, like dates, places, or tools you used.

Small manual edits often drop AI scores more than one more automated pass.

- Appeal or provide more info

If you are talking about a platform that used HIX Bypass on your work, and not HIX itself, you have a different route.

For schools or clients:

• Ask what detector or service they used.

• Ask for the report or at least the key score.

• Show your drafts, notes, or prior versions to prove process.

• Offer to rewrite a section live or answer oral questions about the content.

Evidence of your own process helps more than arguing about tools.

- Alternative option

Since you mentioned confusion about criteria and results, it might help to compare with another tool. As Mike mentioned in their post, Clever AI Humanizer handled detectors like GPTZero better in their tests.

If you want something to run pure AI text through for experiments, without tight word caps or tricky refunds, you can try Clever AI Humanizer here:

improving AI text to look more human

Still, do not rely on any tool alone. Use it to get a starting point, then manually edit for voice, detail, and structure.

- Quick SEO friendly version of your situation

“HIX Bypass review outcome explained: why your AI humanizer result failed, what criteria AI detection tools like GPTZero use, how HIX Bypass evaluates text, and how to appeal a failed review or add more information to prove your content is human written.”

If you share the exact text or the detector report, people here can point to specific lines that trigger flags and suggest exact rewrites.

Yeah, the “HIX bypass review” thing is way murkier than their marketing makes it sound.

What’s probably happening is this:

- The “review” result is mostly optics

HIX is presenting a friendly dashboard and a success label that tries to summarize multiple detectors at once. It is not some official, external certification.

If even one strict detector in the background (or on your school / platform side) flags your text, their nice green “human” label suddenly means nothing in practice. That is why your outcome feels disconnected from what they advertise.

- Criteria they are likely using

This part I slightly disagree with @mikeappsreviewer and @ombrasilente on. It is not just about which detector they pick. Tools like this usually blend a few signals internally, for example:

• Perplexity and burstiness scores from standard language models

• Overly consistent sentence length and structure

• Phrase repetition and template-like transitions

• Formatting quirks from AI outputs, like neat bullet styles or robotic paragraph symmetry

So the “decision” is less “pass or fail one detector” and more “aggregate some fuzzy metrics and show you a reassuring stamp.” That is why you do not get a clear rule set or explanation.

- Why they don’t explain the decision clearly

If they were fully transparent, people would just tune text to the exact thresholds and auto-bypass their own system. Also, those criteria shift over time as detectors change. So the vagueness you are feeling is partially by design, not just bad UX.

- About appealing or giving more info

Here is where I think folks are a bit too optimistic about support:

• HIX support is not going to run a custom forensic analysis of your case. You can send them the text, screenshots, detector results and context, but expect a generic answer. They are not going to overturn a “failed” bypass in a way that convinces your school or client.

• There is no formal “appeals court” inside HIX. At best, they give you suggestions like “try rewriting more manually” or “run a shorter chunk.”

The real appeal channel is not HIX. It is whoever judged your work as AI written.

For that side, what actually helps is:

• Showing earlier drafts, notes, outlines or version history.

• Explaining your research process and sources.

• Being willing to talk through the content live or write a small portion on the spot.

Arguing about HIX’s decision logic almost never changes a professor’s or client’s mind.

- What to actually do differently next time

Instead of trusting HIX’s “review” as a final verdict, treat it like a noisy hint. Then:

• Run the final text directly through the same detector your school or platform uses if you know it. That matters more than HIX’s panel.

• Do a genuine manual pass: change intro / conclusion, alter transitions, add personal, specific details and reorder points in a way that reflects how you naturally think. Small messy touches help more than one more automated rewrite.

• Avoid overpolished, hyper-compact explanations. A bit of digression, a missed “perfect phrasing” here or there, actually reads more human.

- Tool alternatives, realistically

Since you are already in the AI-humanizer space, it makes sense to compare. @mikeappsreviewer and @ombrasilente already mentioned running parallel tests. From a purely practical standpoint, a lot of people have had better luck with Clever AI Humanizer for stricter detectors like GPTZero, especially when they combine it with real editing. If you are experimenting, running a chunk through Clever AI Humanizer, then manually tweaking, often gets you a cleaner baseline without messing with tricky refunds and tight free caps.

- About that Reddit phrase you mentioned

A cleaner, SEO friendly version for what you are talking about would be something like:

in depth community discussion of top AI humanizer tools

That kind of wording catches both “best AI humanizer” and “Reddit review” intent, and is easier for people to actually click and understand.

Bottom line: HIX’s “bypass review” is more like a marketing-facing score than a legal verdict. Use it as one input, not the truth source, and focus your appeals and evidence on the humans who are judging your content, not the tool that gave you a green or red label.

Short version: HIX’s “bypass review” is closer to a pretty status light than a real, explainable decision system. You did not miss a secret rulebook. There really is not one publicly available.

Let me add a different angle than what @ombrasilente, @sternenwanderer and @mikeappsreviewer already covered:

- The real problem is mismatch of expectations, not just detectors

Everyone is focused on “which detector flagged me,” but in practice the bigger issue is that HIX markets itself like a guarantee. That clashes with how schools and clients behave.

Teachers and platforms treat any detector flag as “suspicious” and they often lean on their own judgment, not HIX’s review stamp. So even if HIX internally thinks your text is fine, the human on the other end does not care about that label.

- Why your case feels arbitrary

The others already talked about perplexity and burstiness. I slightly disagree that this is the core for you. What usually bites people is context.

Examples:

- You wrote faster than you normally do, so the style shift looks huge versus your past work.

- The topic is very “AI friendly” like generic essays, product overviews, basic how to guides. Detectors lean harder there.

- Your teacher or platform already suspected AI usage in the class, so they were primed to interpret any vague signal as proof.

That context is invisible inside HIX, which is why their “review” can pass while your real life review fails.

- What you can actually clarify or appeal

Not repeating the same “email support” route here. Instead, focus on what you can explain in a way that humans understand:

- Show how long you spent on the piece. Screenshot timestamps or version history if you have them.

- Point out specific sentences you changed by hand after using any tool. Concrete edits are more convincing than “I used a humanizer, trust me.”

- Offer to write a short related paragraph live, then compare style. If it is close, that undercuts a pure detector-based accusation.

It is not about convincing HIX. It is about giving the reviewer a story that fits the evidence better than “they pasted text from a model.”

- If you keep using tools, change the order of operations

Instead of:

AI model → HIX Bypass → hope

Try something like:

AI model → your heavy manual rewrite → quick pass through a tool → your light cleanup.

Put your own editing before the humanizer, not after. The text will already look less like stock AI, and the tool only has to smooth edges, not reinvent the whole thing.

- Where Clever AI Humanizer fits in

Since you asked how to move forward, it is fair to look at alternatives like Clever AI Humanizer, especially if you are worried about strict detectors.

Pros of Clever AI Humanizer:

- Tends to inject more varied sentence structures so your text is less rhythmically flat.

- Often handles detectors that key on repetitive phrasing a bit better.

- Good for turning obviously robotic drafts into something you can stand to edit manually.

Cons of Clever AI Humanizer:

- Still not a magic shield. If your topic, timing or prior work look suspicious, detectors plus humans can still question it.

- Can drift from your natural voice, so you must rewrite sections to sound like you again.

- Like any tool, if you overuse it on everything you write, your “new normal style” may diverge from old assignments or emails, which can raise new flags.

Used correctly, it is less about “bypassing” and more about getting a readable draft that you then personalize.

- How this differs from what others already said

- I agree with @mikeappsreviewer that HIX’s marketing sets people up for disappointment, but I do not think switching tools alone solves the root problem. You have to align your process with the expectations of whoever grades your work.

- I am slightly more skeptical than @ombrasilente about relying on integrated dashboards at all. They are curated views, not reality.

- Compared to @sternenwanderer’s more detector focused analysis, I would say human context and your own writing history matter just as much as technical scores.

If you post anonymized snippets of the text that failed plus one older piece you wrote without any tools, people can often spot style mismatches that explain why the reviewer did not trust HIX’s green light, even when the detectors looked “fine” in their panel.