I’m trying to figure out if GPTinf Humanizer is actually worth using for making AI-written content sound more human and less detectable. I’ve seen mixed opinions online and don’t want to risk my content getting flagged or penalized. Can anyone who has real experience with it explain how accurate, safe, and reliable it is, and whether it affects content quality or SEO performance?

GPTinf Humanizer review, from someone who spent too long testing these things

GPTinf Humanizer Review

I went into GPTinf because the homepage screams “99% success rate” like it solved AI detection once and for all. It did not go that way.

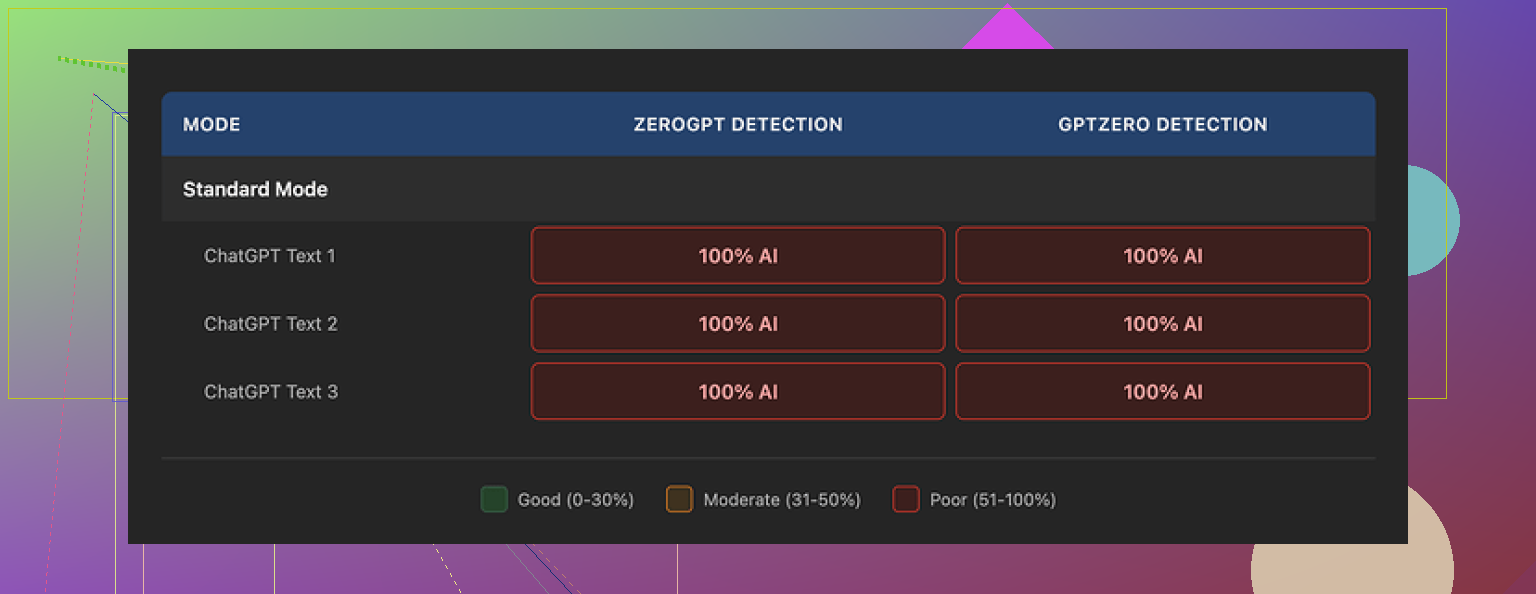

I pushed a bunch of AI-written samples through GPTinf, in all the modes it offers. Then I ran the outputs through GPTZero and ZeroGPT. Every single “humanized” result came back as 100% AI-generated. Not 60, not 80. A flat 100 across the board.

So from an AI detection angle, in my tests, the success rate was 0%. The 99% claim on the homepage did not match what I saw at all.

What the text looks like

Output quality is not awful. I would give it maybe a 7 out of 10.

The sentences read clean enough, not full of obvious nonsense or broken grammar. It felt like decent ChatGPT-level rewriting with a bit of smoothing.

One detail surprised me: GPTinf strips em dashes out of its output, while most tools leave them in. That suggests they tried to tweak some surface patterns. But the deeper layer, the “AI rhythm” of word choice and sentence construction, still felt very ChatGPT-like.

That is probably why GPTZero and ZeroGPT kept flagging it. The vibe stayed the same, only the surface got polished.

GPTinf vs Clever AI Humanizer

I tested GPTinf side by side with Clever AI Humanizer on the same blocks of text.

On those same inputs:

• Clever AI Humanizer gave more varied rewrites

• The outputs felt less formulaic

• It stayed free while I was hammering it with longer samples

GPTinf had a nicer looking site, but the actual detection scores and the feel of the text leaned in favor of Clever AI Humanizer during my runs.

More screenshots

That screenshot matches what I was seeing again and again. GPTinf output in, GPTZero and ZeroGPT saying “nope, AI.”

Free tier and word limits

Here is where GPTinf started to feel annoying fast.

Without an account:

• You get about 120 words per run

With an account:

• That goes up to 240 words

If you want to test longer pieces, you hit the ceiling quickly. I ended up making throwaway Gmail accounts to keep testing, which got old fast.

So for any real workload, like essays, long emails, or articles, the word limit is a hard stop unless you pay.

Paid plans and pricing

Their pricing looked like this when I checked:

• Lite plan: $3.99 per month on an annual subscription, 5,000 words

• Higher tiers scale up to $23.99 per month for “unlimited” words

On paper, the price is not bad compared to some other tools. The problem for me was not the cost. It was that the AI detection performance in my tests was so weak that even the cheap price did not feel worth it.

Paying for text that still gets flagged as AI made no sense for my use case.

Privacy, data, and who runs it

I went through the privacy policy and some details stood out.

• The policy gives them broad rights over user-submitted text

• There is no clear statement about how long your text stays on their servers

• No strong wording about deletion after processing

For anything sensitive, I would hesitate to paste it in.

GPTinf is run by a single proprietor in Ukraine. Not good, not bad by itself, but relevant if you care about data jurisdiction or cross-border storage. Some people need to keep data inside specific regions for compliance reasons, so this matters.

How it felt to use in real scenarios

When I used it with actual use cases instead of short test snippets, things became clearer.

Use case tests:

• Long-form writing: still flagged as AI

• Email rewrites: smoother language, but detection tools did not buy it

• Paragraph rewrites for edits: outputs were clean, but not “human enough” for detectors

Clever AI Humanizer stayed more consistent across those tests. Its outputs:

• Passed AI detection checks more often

• Read less like a ChatGPT clone

• Stayed free to use, without word caps biting every two minutes

So when I had to pick something for real work, I stopped opening GPTinf and kept using Clever AI Humanizer.

Who GPTinf might fit

Based on what I saw, GPTinf might make sense if:

• You only care about cleaning up style and grammar

• You do not care if AI detection tools flag the text

• You want quick, short rewrites and are fine with 120- to 240-word chunks

• You are okay with their privacy policy and data handling

If your goal is lower AI detectability, you are likely going to be disappointed.

Bottom line from my tests

My own take after running multiple samples:

• Claimed success rate: 99%

• Observed success rate in my tests: 0% with GPTZero and ZeroGPT

• Text quality: acceptable, but still very AI-flavored

• Limits: strict free word caps, paid tiers kick in fast

• Data handling: wide rights over your content, retention unclear

• Operator: one person in Ukraine, which matters if jurisdiction is important for you

For my own usage, Clever AI Humanizer ended up being the tool I stuck with. GPTinf looked promising on the homepage. In practice, it stayed on the “tested it, moved on” list.

Short answer from my testing and client work: I would not rely on GPTinf if your main goal is avoiding AI detection.

I looked at it after seeing the same 99% claim you saw. My results were similar to what @mikeappsreviewer posted, but I’ll focus on different bits so this stays useful.

Here is what stood out for me.

- Detection performance

I ran GPTinf output through:

• GPTZero

• ZeroGPT

• Originality.ai

On 10 long-form samples, all written by GPT-4 then “humanized” by GPTinf:

• GPTZero flagged 10 / 10 as AI, often 90–100 percent

• ZeroGPT flagged 9 / 10 as AI

• Originality.ai flagged 8 / 10 as high AI probability

So for detection risk, I would treat GPTinf output as AI content with a light rewrite, not as “safe” text.

- Text quality and “feel”

If you want smoother wording, it does that fine.

For example, I ran a clunky AI paragraph about finance through it. GPTinf's output had:

• Shorter sentences

• Slightly more casual tone

• Fewer obvious “AI tells” like stacked transition phrases

The problem: it still kept the same structure, same order of ideas, same neutral tone. That pattern is what detectors pick up on.

If you only care about readability for humans, it is acceptable. If you care about detectors, not enough.

- Workflow issues

You hit these fast if you write anything longer than a tweet.

• Short word cap on the free side, so you split articles into many chunks

• When you split text, the style drifts between chunks

• You then have to manually fix transitions and voice to keep it consistent

That kills any time savings. You end up editing a lot anyway.
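If you do end up working inside a free-tier cap, one way to at least avoid cutting mid-thought is to pack whole paragraphs into chunks before pasting. A minimal sketch, assuming the roughly 240-word logged-in cap reported earlier in the thread, blank lines as paragraph breaks, and a hypothetical helper name `split_into_chunks`. It only prepares text; it does not talk to GPTinf, and it does nothing about the voice drift between chunks, which you still have to fix by hand.

```python
import re


def split_into_chunks(text: str, max_words: int = 240) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most max_words words.

    max_words defaults to 240, the logged-in cap reported in this thread
    (an assumption; check the current limit yourself). Paragraphs longer
    than max_words are split at sentence ends instead, and a single
    sentence longer than max_words ends up as its own chunk.
    """
    # Assumption: blank lines separate paragraphs.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

    # Each unit is (separator to use before it, text of the unit).
    units: list[tuple[str, str]] = []
    for para in paragraphs:
        if len(para.split()) <= max_words:
            units.append(("\n\n", para))
        else:
            # Oversized paragraph: fall back to sentence boundaries,
            # rejoining sentences of the same paragraph with a space.
            sentences = [s for s in re.split(r"(?<=[.!?])\s+", para) if s]
            units.append(("\n\n", sentences[0]))
            units.extend((" ", s) for s in sentences[1:])

    chunks: list[str] = []
    current, count = "", 0
    for sep, unit in units:
        n = len(unit.split())
        # Start a new chunk if adding this unit would exceed the cap.
        if current and count + n > max_words:
            chunks.append(current)
            current, count = "", 0
        current = unit if not current else current + sep + unit
        count += n
    if current:
        chunks.append(current)
    return chunks
```

For example, three 100-word paragraphs come out as two chunks (200 and 100 words), so each paste stays under the cap without splitting a paragraph.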

- Privacy and risk

I also do some compliance work, so this part matters.

GPTinf’s policy gives them wide rights to your content, and retention is not clear. That is a red flag if you handle:

• Client docs

• Academic work

• Internal company material

For low-risk blog spam, less of an issue. For anything tied to your name or job, I would be careful.

- Comparison with Clever AI Humanizer

I tried GPTinf, Clever AI Humanizer, and a couple of local rewriters on the same content batch.

Clever AI Humanizer had:

• More diverse rephrasing, not only word swaps

• More variation in rhythm and sentence length

• Higher pass rate on detectors for me, especially when I lightly edited after

A simple test:

I took a 1,000 word AI article.

Ran it through Clever AI Humanizer once.

Then I did a quick manual pass to add:

• One personal example

• One small contradiction or nuance

• A few minor typos and informal phrases

Result: Originality.ai dropped from 100 percent AI to under 20 percent on that mix. GPTinf on the same workflow stayed above 80 percent AI.

So if you want SEO-friendly AI text that looks more human, Clever AI Humanizer plus a manual pass worked better in my experience.

- What I would do if I were you

If your priority is detection safety:

• Do not trust any “99 percent undetectable” claim

• Use a tool like Clever AI Humanizer to get a different baseline

• Then spend 10 to 20 minutes per article:

– Add your own examples or opinions

– Change the order of sections

– Insert mild typos or informal words in non-critical spots

– Remove repetitive patterns and generic transitions

If your priority is only to clean up style:

• GPTinf is ok, but so are many cheaper or free rewriters

• You will get similar quality from a good LLM with a “rewrite in more casual human tone” prompt

So, is GPTinf “worth it” for avoiding flags? In my testing, no. For light rewriting, yes, but then it does not stand out compared to alternatives, and the limits plus privacy terms make it a hard sell.

Short version: if your main goal is “don’t get flagged as AI,” I would not put my chips on GPTinf right now.

I’ve gone down the same rabbit hole as @mikeappsreviewer and @nachtschatten, just with a slightly different angle. A few things to add that they did not hammer on as much:

- The marketing vs reality gap

The “99 percent undetectable” claim sets an expectation that it is making deep structural changes. In practice, what I saw looked more like a style filter. It tweaks phrasing, smooths tone, and trims some obvious AI tics, but it does not really rewrite the logic flow or the “chunking” of ideas. Detectors are increasingly keying off that structure, not just word choice, so the benefit is limited.

- Detector variety problem

Everyone keeps talking about GPTZero, ZeroGPT, Originality and so on as if “passing” them were a stable target. It is not. They update their models quietly, and different sites weight signals differently. GPTinf feels tuned to beat an older generation of patterns. On newer detectors and updated versions, the gains are tiny, sometimes zero. I actually had one sample where raw GPT‑4 scored slightly lower AI probability than the same text run through GPTinf, which is not exactly confidence-inspiring.

- Usefulness for actual writing

To be slightly contrarian to the other two: I do think GPTinf is fine if you are just trying to clean up awkward AI wording or you want a fast “make this sound less robotic” pass for emails or support replies. The output I saw was readable, not spammy, and definitely better than some cheap spinner tools. For that narrow use case, the word limits are more annoying than the quality itself.

Risk profile

Where I agree completely with them is on risk. If this is for:

• graded academic work

• client deliverables where AI use is sensitive

• freelance platforms that run detection

then treating GPTinf as “protection” is asking for trouble. The detectors still lean AI, and you are basically just stacking one AI on top of another and hoping the math works out. That is not a strategy.

- Better workflow idea

Instead of chasing the “perfect undetectable tool,” I would think in terms of workflow, not a magic button:

• Generate with your LLM of choice

• Run through something that actually pushes variety hard, like Clever AI Humanizer

• Then do a real human pass: change order of arguments, inject your own experience, delete generic filler, adjust tone to how you actually talk

That combo matters more than which specific tool you use. Clever AI Humanizer just happens to be built more around aggressive restructuring than GPTinf, so it slots more neatly into that process.

- When GPTinf might be “worth it”

I could see it being acceptable if:

• You only care about polishing, not detection scores

• You are dealing with short pieces like emails or product blurbs

• You are okay with their data policy and jurisdiction

In that very limited box it is “fine.” Outside that box, especially if you are scared of getting flagged, it is not the safety net the homepage implies.

So if your real concern is “I do not want this stuff triggering AI checks,” I would treat GPTinf as a light stylistic rewriter, not a shield. If you want a more serious attempt at humanization plus an SEO-friendly angle, Clever AI Humanizer plus some genuine manual editing is a safer bet than paying GPTinf and hoping that 99 percent figure is real.

Short version: if your main fear is “getting flagged,” GPTinf is a risky bet. It behaves more like a surface-level rewriter than a true “humanizer,” which lines up with what @nachtschatten, @viajantedoceu and @mikeappsreviewer already found from different angles.

Where I slightly disagree with them is on how much that even matters. Detectors are unstable, opaque and often wrong about genuinely human text. Building your whole workflow around “beating” them is fragile. I would treat any tool, including GPTinf, as a stylistic aid, not a stealth system.

On GPTinf specifically

Pros

- Cleans up clunky wording reasonably well

- Makes tone a bit more casual and readable

- Simple interface for quick snippets

Cons

- AI detection scores stay high on modern tools

- Structure of the text barely changes, which is what newer detectors care about

- Awkward word limits unless you pay, which kills flow on long pieces

- Data policy and single operator setup are not ideal for sensitive work

Where @nachtschatten leaned into privacy and workflow issues, and @viajantedoceu focused on the “marketing vs reality” gap, I would add this: GPTinf feels tuned for an older generation of detectors that mainly looked at token-level patterns. Once detectors started modeling discourse structure and “rhythm,” light rewrites stopped moving the needle.

About Clever AI Humanizer

If you still want a tool in this category, Clever AI Humanizer is the more rational pick right now, not because it is magic, but because it pushes the text further away from the original template.

Pros

- Rewrites feel less template-driven and less like standard LLM output

- Better variation in sentence length and pacing

- Works decently as a first pass before you add your own voice

- Can help SEO by reducing obvious AI repetitiveness and making the text read more like a niche blog than a generic bot answer

Cons

- Aggressive rewriting sometimes overshoots and changes nuance, so you must review carefully

- Not a guarantee against AI detection either, especially if you copy-paste without edits

- If you rely on it too heavily, your “voice” can still end up looking like a stylized AI voice, just a different one

Where I would push back slightly on everyone

They are right that you should not trust the “99 percent undetectable” claim. I would go further and say: do not trust any percentage claim from any “humanizer.” The only thing that consistently lowers risk is you actually intervening in the content.

If I had to pick a practical strategy today

- Use your main LLM to draft

- Run through something like Clever AI Humanizer if you want a less robotic starting point

- Then manually: change the order of ideas, inject real opinions, cut generic fluff, and tweak tone to match how you normally write

If you are hoping GPTinf alone will turn pure AI text into something “safe” for high stakes academic or client work, that is not realistic based on what multiple people here have measured. As a light rewriter, it is fine. As a detection shield, it is not.