I’ve been testing Clever AI Humanizer on some important articles and I’m worried it might be changing the original intent and nuance of my writing. I need help from anyone who has used it to understand if it really preserves meaning or if it subtly rewrites ideas in a way that could hurt accuracy, SEO, or tone. What should I look for, and are there better practices or tools to keep my content sounding natural without losing what I actually meant?

You know that moment when you paste your ChatGPT essay into an AI checker and it just slaps you with ‘99% AI’? That’s basically how I ended up poking around with Clever AI Humanizer.

I kept seeing people mention it, the devs brag that it’s “one of the best” for making AI text pass detectors, and I did not buy that at face value. So a few of us actually sat down, ran real tests, and pushed a bunch of raw AI outputs through different detectors to see if this thing is legit or just another “pay first, pray later” gadget.

Below is what actually happened, no sugarcoating.

So… what is Clever AI Humanizer?

Clever AI Humanizer lives here: https://aihumanizer.net/

Basic idea: you paste in AI-generated text, hit a button, and it rewrites it so it reads more like something a real person wrote. Stuff from ChatGPT, Gemini, etc. gets rearranged, tone shifts, sentences stop sounding like they were mass-produced in the same factory.

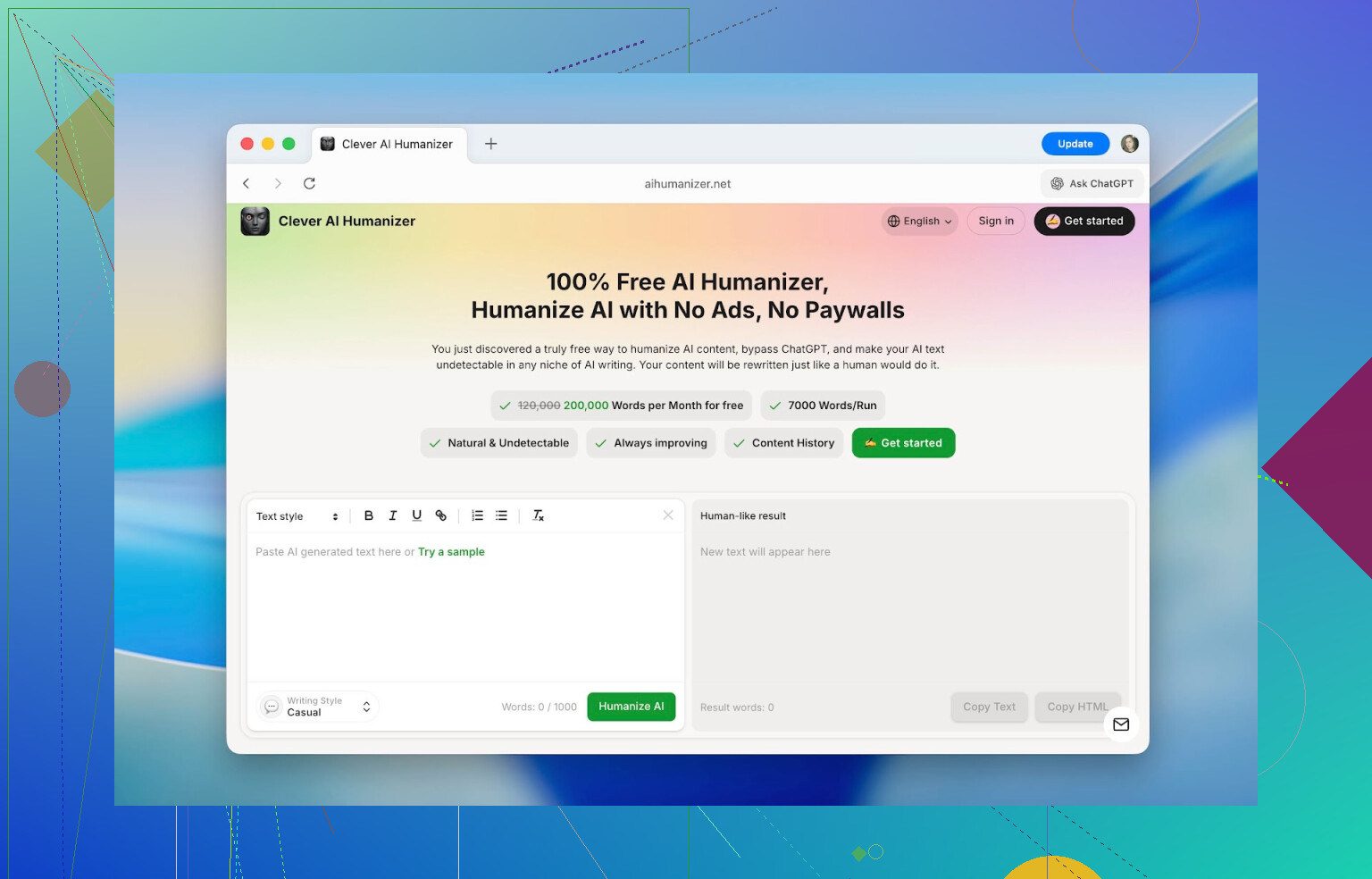

What surprised me first wasn’t even the output, it was the interface. Most “AI humanizer” sites look like they were slapped together in a weekend: tiny input box, confusing buttons, no sense someone would actually use it daily.

This one actually feels like a tool, not a side project. Big clean workspace, clear “paste here / result there” layout, easy word count tracking. No figuring out “where do I click?” It’s obvious.

On top of that: it’s free. Real free. No “you get 200 words then hit a paywall.”

- Up to 1,000 words per run

- About 7,000 words per day total:

  - 4,000 without an account

  - Another 3,000 unlocked after a quick signup

That’s enough to process full essays, articles, or long notes, not just a couple of test paragraphs.
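With those limits, anything longer than 1,000 words has to go through in several runs. Here is a minimal sketch of splitting a draft at paragraph boundaries so each run stays under the cap. The function names are made up for illustration, and it assumes the site counts words roughly the way a whitespace split does, which I have not verified.

```python
import re

PER_RUN_LIMIT = 1000   # words per run, as stated in the post
DAILY_LIMIT = 7000     # words per day with an account, as stated in the post


def split_into_runs(text, limit=PER_RUN_LIMIT):
    """Group paragraphs into chunks of at most `limit` words each.

    A single paragraph longer than `limit` is kept whole as its own chunk
    and would still need to be split by hand.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current, count = [], [], 0
    for para in paragraphs:
        words = len(para.split())
        # Start a new chunk if adding this paragraph would exceed the limit.
        if current and count + words > limit:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def fits_daily_budget(text, daily_limit=DAILY_LIMIT):
    """True if the whole draft fits in one day's allowance."""
    return len(text.split()) <= daily_limit
```

Splitting on blank lines keeps paragraphs intact, so each run gets complete thoughts rather than text cut mid-sentence.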

Key things that actually stood out

When I first opened it, I expected the usual: paste > rewrite > slight improvement > still detected as AI. But a few details ended up being more interesting than I thought.

1. Detection scores dropped a lot

We took raw ChatGPT outputs, the kind you get from a first try prompt. Nothing edited.

Detectors like ZeroGPT were tagging them as 100% AI. After pushing the same text through Clever AI Humanizer, scores dropped hard. Regularly seeing stuff like:

- 13%

- 6%

- Sometimes close to 0%

No, that does not mean “guaranteed safe forever.” Detection systems keep changing their rules, and they look for patterns, not magic words. But the difference in how the text reads and how tools react to it was not subtle.

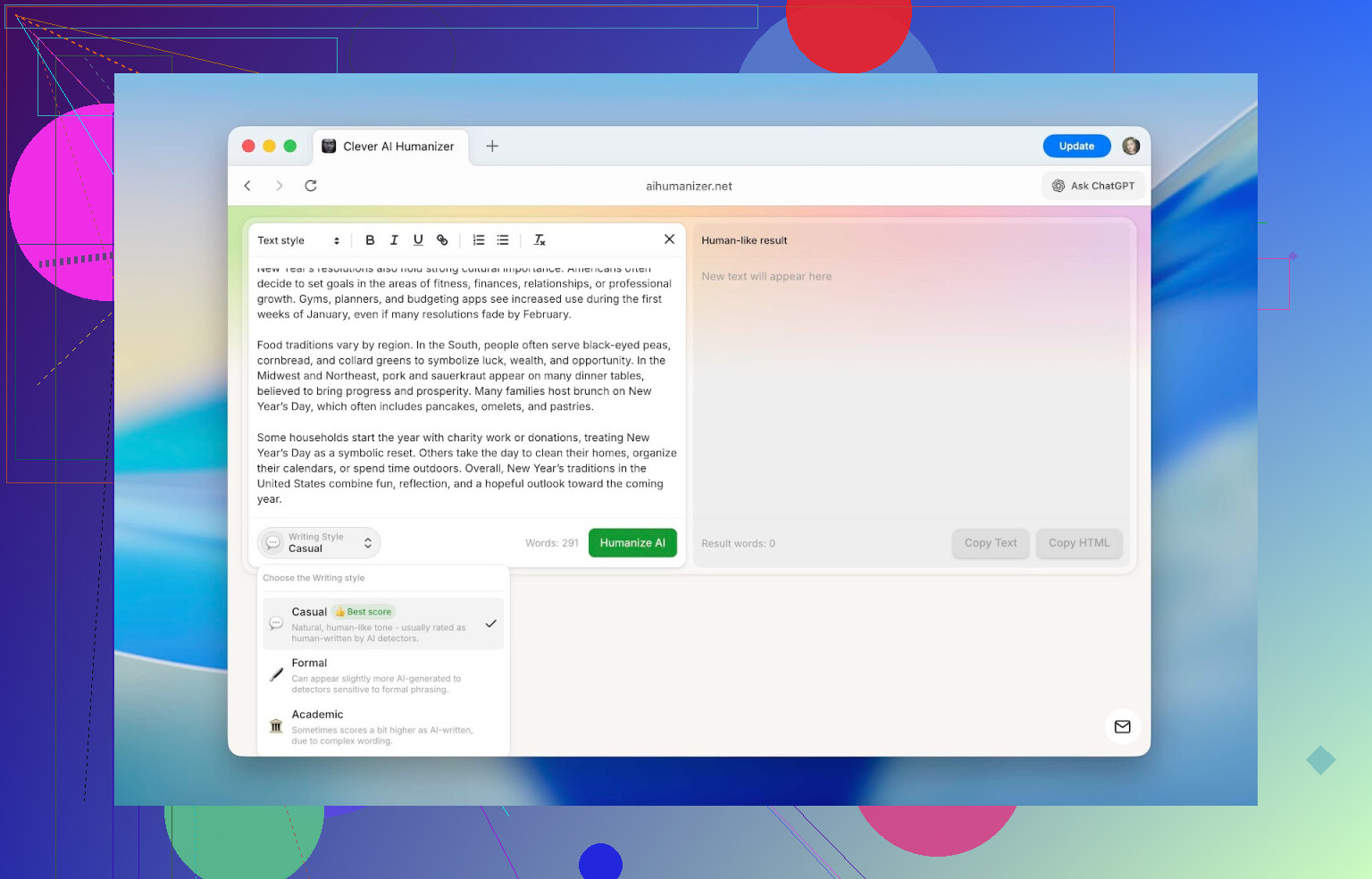

2. Different tones that actually feel different

You can pick between three styles:

- Casual

- Formal

- Academic

Casual feels conversational and a bit softer.

Formal gets more structured and neutral.

Academic leans into research-style phrasing.

The AI detection scores shifted a bit depending on the style (usually within 3–5% of each other), but nothing dramatic. For word limit reasons, we mostly tested in Casual.

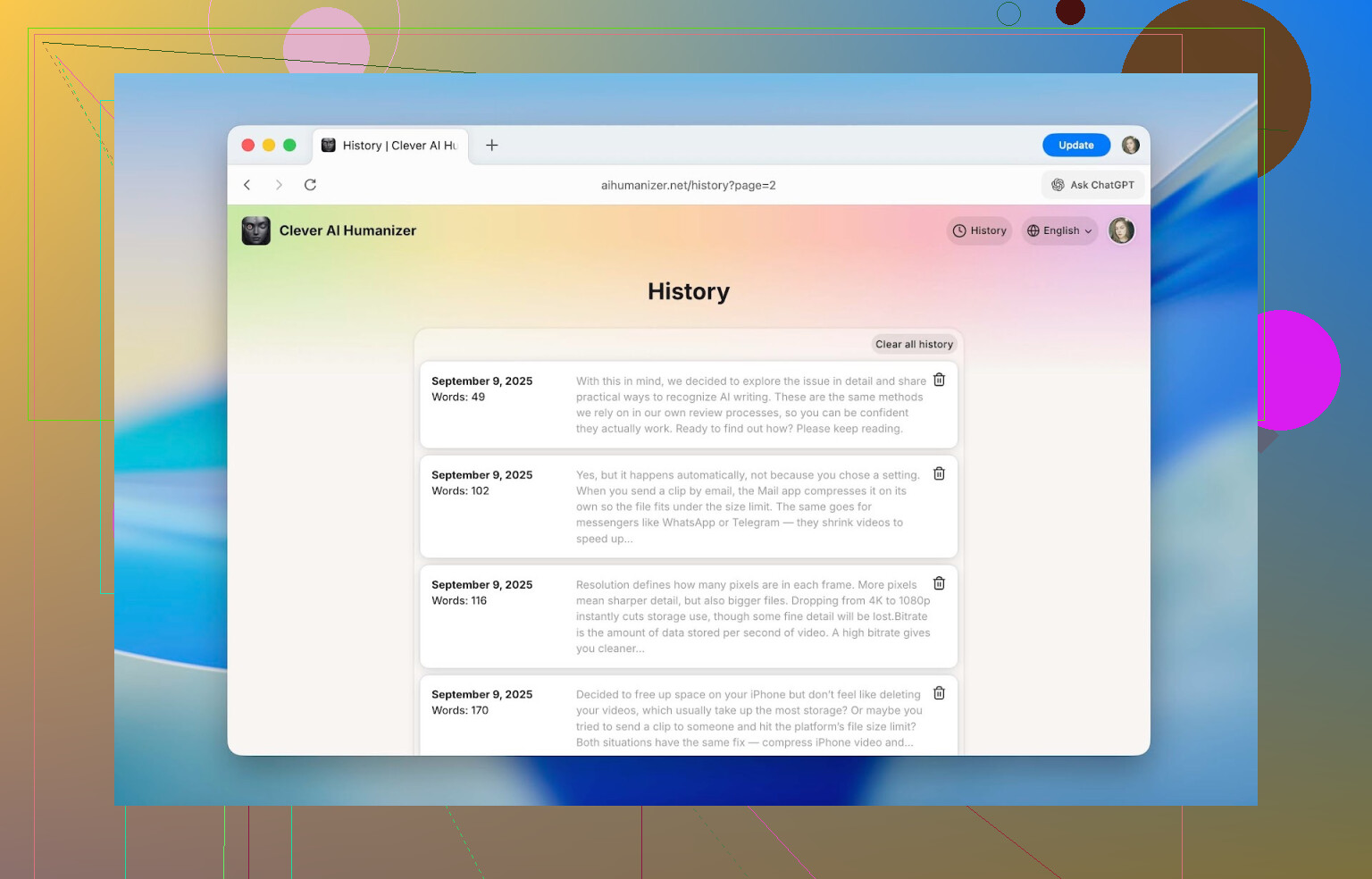

3. Full rewrite history

This was a sneaky useful one.

Once you create an account, the site keeps a log of your previous rewrites: dates, word counts, short previews. While working on this, I was able to dig up stuff I had run through it back in September, and it was all still there.

If you’re doing long projects (multiple drafts, versions, etc.), being able to find “that version I did last week” is actually nice.

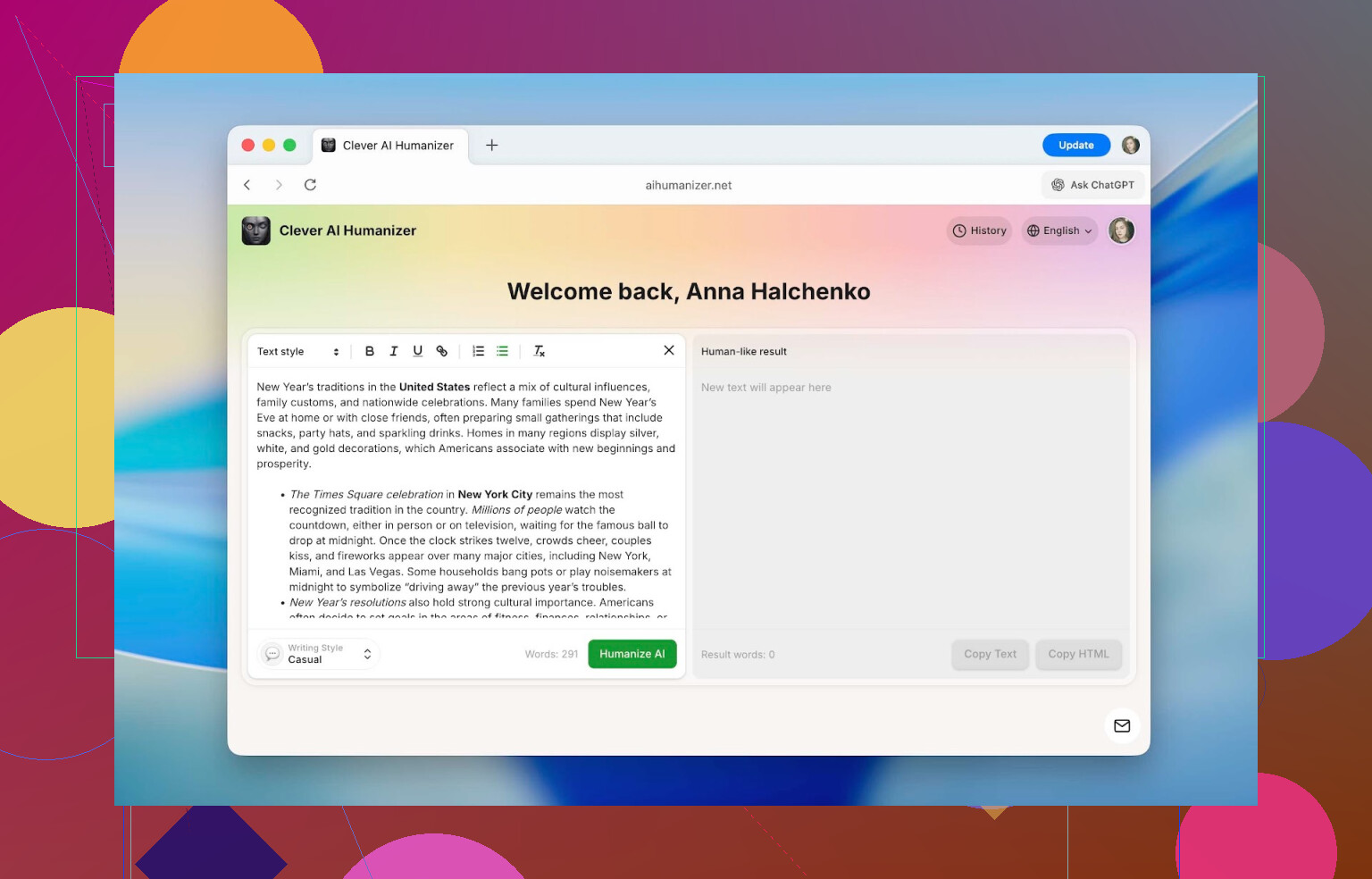

4. Keeps formatting instead of wrecking it

This is where a lot of tools annoy me. You paste a nicely formatted text and get back a wall of unformatted nonsense.

Here you can use:

- Headings

- Bold / italics / underline

- Links

- Bullet & numbered lists

And it all survives the humanization and copy-paste step.

So if you’re working on school assignments, documentation, or anything where formatting matters, you don’t have to redo it all manually afterward.

5. Works in more than just English

It supports multiple languages: French, Spanish, Italian, German, Dutch, Portuguese, Polish, and more. The actual interface is also available in several languages, so you’re not forced to fight with browser translation if English isn’t your main language.

How to use Clever AI Humanizer (step by step)

This part is the “how I actually used it” bit, not some vague marketing description.

What it does internally is their secret sauce. They have a page trying to explain it here:

https://aihumanizer.net/how-does-ai-humanizer-work

I’m not reverse‑engineering their model. I’m just showing the user-facing process.

The flow is honestly pretty simple:

-

Open the site: https://aihumanizer.net/

-

Optional but useful: click Sign In (top right).

You can use:- Apple

- Email + password

Signing in gives you more daily words and unlocks your history log.

-

Paste your original AI-generated text into the left text box.

-

At the bottom, pick your style (Casual / Formal / Academic), then hit Humanize AI.

-

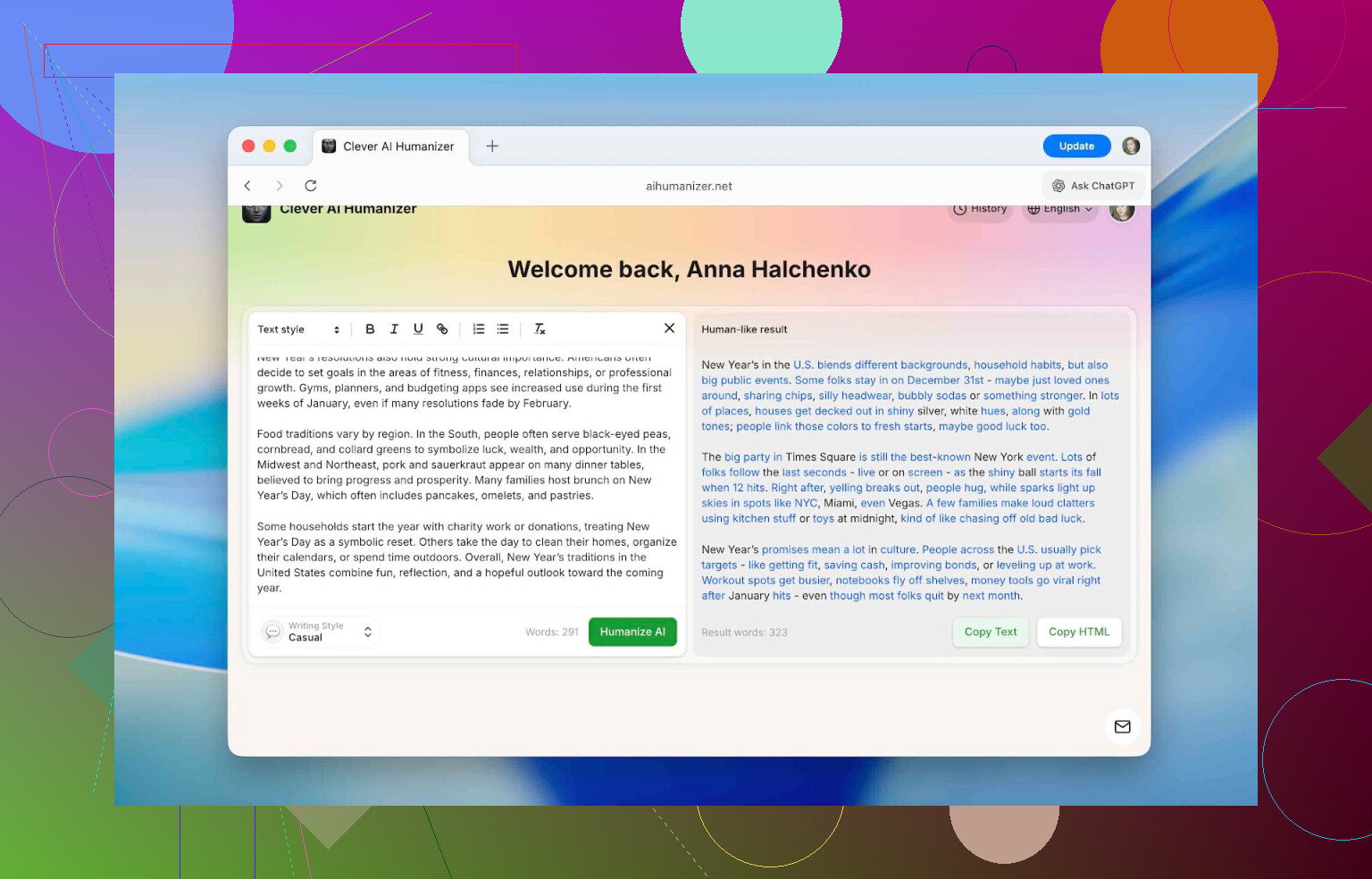

Wait a moment. The rewritten text appears on the right side.

Changes are highlighted in blue so you can see what got altered.

After that, you just copy it and drop it into your doc, website, assignment, or even back into an AI detector if you want to see the new scores.

How well does it beat AI detectors?

This is probably the part most people care about.

We tested it using several “popular” detectors that schools and companies like to throw around in docs and policies:

- QuillBot AI Checker

- ZeroGPT

- GPTZero

- Undetectable AI detector

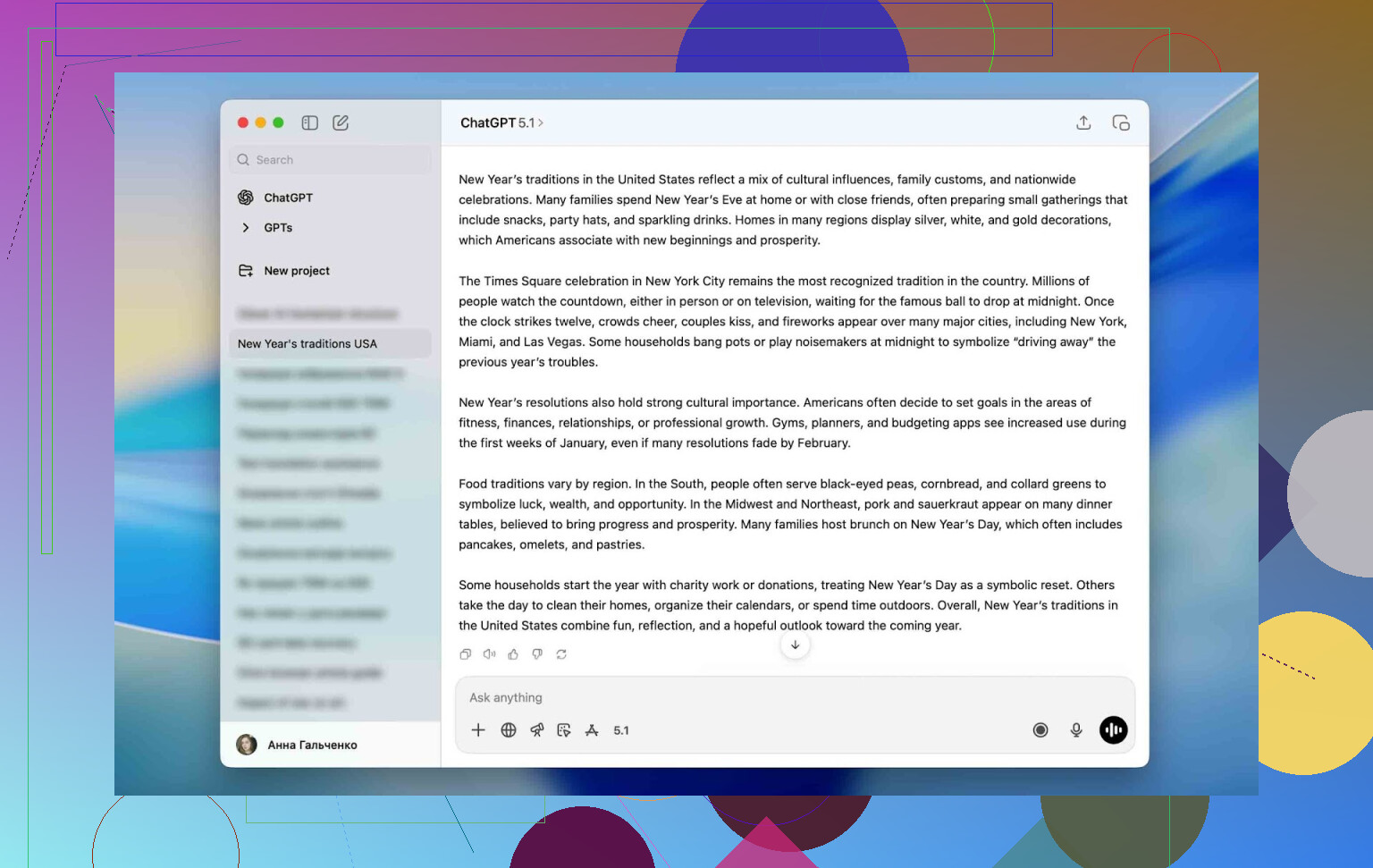

We tried to mimic a real‑world “lazy user” scenario:

- Generate a text with ChatGPT. Nothing fancy. Just a generic prompt that produced standard, easily detectable AI prose.

- Run that raw text through all 4 detectors. Every single one tagged it as AI with very high scores.

- Take that same text, run it once through Clever AI Humanizer in Casual mode. No manual edits.

- Put the humanized version back into the same 4 detectors.

Here’s how the scores changed:

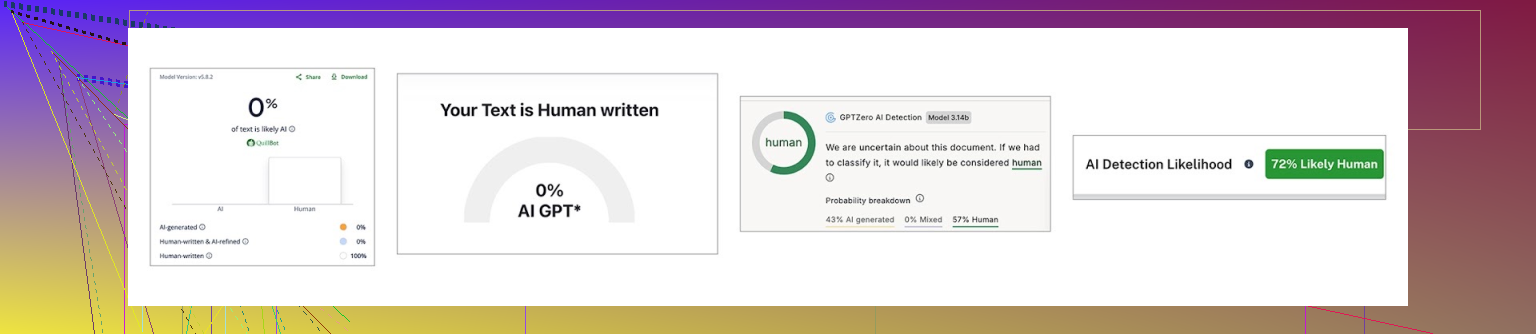

| | QuillBot | ZeroGPT | GPTZero | Undetectable AI |
|---|---|---|---|---|
| Before, % | 98 | 100 | 100 | 90 |
| After, % | 0 | 0 | 43 | 27 |

So:

- QuillBot: went from 98% AI to 0%

- ZeroGPT: from 100% to 0%

- GPTZero: from 100% to 43%

- Undetectable AI: from 90% to 27%

Not uniform, but the drop was huge almost everywhere.
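If you want the drops as numbers rather than eyeballing them, the arithmetic is simple. This is only a sketch using the scores from the table above; the variable names are mine.

```python
# Scores copied from the table above (percent "AI" reported by each detector).
before = {"QuillBot": 98, "ZeroGPT": 100, "GPTZero": 100, "Undetectable AI": 90}
after = {"QuillBot": 0, "ZeroGPT": 0, "GPTZero": 43, "Undetectable AI": 27}

# Drop in percentage points per detector, plus the plain average.
drops = {name: before[name] - after[name] for name in before}
average_drop = sum(drops.values()) / len(drops)

for name, drop in drops.items():
    print(f"{name}: {before[name]}% -> {after[name]}% (down {drop} points)")
print(f"Average drop: {average_drop:.1f} points")
```

The average hides the spread: GPTZero and Undetectable AI dropped far less than the other two.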

We’d already seen in our LLM detector comparison writeup here:

https://www.insanelymac.com/blog/clever-ai-humanizer-review/

that these tools don’t agree with each other anyway. They all use different signals and thresholds. None of them gives “proof,” only probability.

So the main takeaway is:

- It seriously alters the writing patterns detectors latch on to.

- But different detectors still disagree, like they always do.

Important caveat

I want to be really clear about this part:

We do not recommend using pure AI text, humanized or not, as a full submission for academic or professional work.

For testing, we used 100% AI‑generated content because you need a controlled baseline. In real life, a healthier workflow looks like:

- You write the actual core content yourself.

- You use AI as an assistant (rewording, ideas, typo cleanup).

- You run the AI‑heavy fragments through a humanizer to smooth obvious “AI voice” patterns.

That way, the piece still reflects your thinking, but you avoid detectors flagging the bits where AI helped. It’s a balance between authenticity and practicality, not a “press button to cheat” solution.

How it compares to other AI humanizers

You can praise anything in isolation. The real test is how it stacks up when you put it next to other tools that people actually find in Google.

We grabbed a bunch of popular ones you’ve probably seen mentioned:

- Humanize AI

- Originality.ai Humanizer

- Undetectable AI Humanizer

- QuillBot AI Humanizer

- AI Humanize

- Decopy AI Humanizer

Then we set some basic comparison criteria that matter in normal use:

- Pricing (free / subscription / credits)

- Monthly word limits

- Extra features

- How much they lower detection scores, tested on the same starting text

We reused the exact same ChatGPT sample as before and ran every humanized version through ZeroGPT for consistency.

Here’s the summary:

| Metrics | Clever AI Humanizer | Humanize AI | Originality.ai Humanizer | Undetectable AI Humanizer | QuillBot AI Humanizer | AI Humanize | Decopy AI Humanizer |
|---|---|---|---|---|---|---|---|
| Pricing model | Free | Light $19 / Standard $29 / Pro $79 | $14.95/month or pay-as-you-go $30 | from $19/month | $9.95/month | Basic $15 / Pro $25 / Unlimited $40 | Free |
| Monthly word limit | 210,000 | 20,000 | 200,000 | 20,000 | Unlimited | 15,000 | Unlimited |
| Additional features | Formatting preserved, rewrite history, 3 tone modes | Humanization style | Plagiarism/AI detection, scan history, 4 tone modes, control of the output text length | – | Rewrite history | 8 tone modes, rewrite history | 8 tone modes, control of the output text length |
| ZeroGPT AI score after humanizing (lower is better) | 0% | 100% | 100% | 17.76% | 65.12% | 53.74% | 62.4% |

Disclaimer: some of these tools cripple their free tiers so badly you can’t really test them in a realistic way. In those cases, we used the cheapest paid tier to get numbers that actually match how someone would use them for real work.

Now, a lot of little things matter (interface, languages, formatting behavior). But if we’re honest, 2 things matter most:

- How much the tool cuts AI detection scores.

- How much that costs you.

On those two axes, Clever AI Humanizer came out looking very strong:

- Lowest detection scores in our ZeroGPT tests

- No subscription required

- Monthly word allowance that’s actually usable

The most disappointing part of the testing was QuillBot AI Humanizer and Originality.ai Humanizer. Both have big names and paid plans, but the output still looked nearly 100% AI to ZeroGPT. That kind of defeats the purpose if your goal is specifically to reduce detection.

In our runs, the top two options were:

- Clever AI Humanizer

  Best detection performance, free, with nice extras like history and formatting support.

- Undetectable AI Humanizer

  Solid performance, but fully paid and the price changes depending on how many words you need. Expect a starting point around $19 per month.

Where this actually makes sense to use

People immediately jump to “homework” when they hear “AI humanizer,” but it’s not limited to education. Basically, anywhere AI-generated writing sneaks in and starts to sound the same as everyone else’s, this kind of tool helps.

Some typical use cases:

- Cleaning up AI-heavy parts in essays, reports, homework, and class presentations.

- Rewriting social posts: Insta captions, Threads updates, TikTok / YouTube descriptions.

- Making product descriptions on marketplaces feel less generic and more trustworthy.

- Fixing blog posts or website copy that started as AI drafts.

- Polishing internal company docs that were drafted with AI help.

- Adapting guest posts or sponsored articles so they match the tone of the publication.

All of these scenarios have the same pain point: the “AI voice” is too obvious. Clever AI Humanizer helps strip that away in one pass so you’re not manually rephrasing line by line.

Final verdict after actually using it

After running a bunch of tests, my takeaway is pretty straightforward:

- The devs’ claims weren’t total hype.

- It really does cut AI detection rates across several different tools.

- It does this while being free and not uselessly limited.

- Daily ~7,000 words is plenty for multiple essays or several medium projects.

- Features like history and tone selection are actually practical, not just bullet points for the homepage.

That is why it ended up at the top of our ranking here:

https://www.insanelymac.com/blog/clever-ai-humanizer-review/

If your goal is to make AI-assisted writing sound more like you and less like a generic chatbot, it’s absolutely worth trying.

Just don’t fall into the trap of letting tools replace your thinking. Use AI (and humanizers) as support: to polish, to rephrase, to help structure. The ideas and arguments still need to be yours.

If you’ve used Clever AI Humanizer yourself or tried to deal with humanized AI content in any context, you can drop your experiences here:

https://www.insanelymac.com/forum/

The whole topic is evolving fast and honestly worth an ongoing discussion.

Short answer: yes, it can shift meaning and nuance, especially on more careful / “voice-heavy” pieces, but it’s not random and you can keep it under control if you treat it as a draft editor, not a one-click replacement.

A few things I’ve noticed using Clever AI Humanizer on longer, “important” stuff:

1. It’s very aggressive at the sentence level

For simple, factual text, it usually keeps the core meaning. Where it starts to drift is:

- qualifiers: “likely,” “somewhat,” “in rare cases” sometimes vanish or get softened/strengthened

- stance: “I strongly disagree” might turn into “I don’t really agree,” which is not the same thing

- hedging: academic tone can turn into something more confident than you actually intended

So yes, it can quietly change how strong or cautious you sound.

2. Tone presets can distort intent

I don’t fully agree with @mikeappsreviewer that the tone modes are a pure win.

- Casual sometimes adds friendliness where you wanted distance or neutrality

- Academic can over-formalize personal opinions so they sound like claims of fact

If the article hinges on subtle tone shifts (critical vs supportive, cautious vs assertive), double-check every paragraph after humanizing.

3. It sometimes “fills gaps” like a regular AI

Clever AI Humanizer is better than most at staying close to the text, but if a sentence is vague, it will occasionally “clarify” it in a way that chooses one interpretation of your idea. That’s where intent can really get bent.

4. How to keep control over meaning (what’s actually worked for me)

- Use it in small chunks, not entire articles

  Paste 1–2 paragraphs at a time, especially for sections where your argument is subtle. Compare before/after. If something feels off, undo and re-run or tweak manually.

- Lock in key phrases

  Any phrase that is legally, technically, or emotionally important (e.g. “in my personal opinion,” “in very limited circumstances,” “we are not liable for…”) I literally reinsert by hand if the tool alters it.

- Skim specifically for these failure points

  After humanizing, quickly scan for:

  - numeric info (dates, percentages, quantities)

  - negations: “not,” “never,” “no longer”

  - qualifiers: “often,” “rarely,” “sometimes,” “under certain conditions”

  Those are where meaning flips happen.

- Don’t rely on it for rhetorical or creative sections

  Openings, closings, and key argument paragraphs I keep mostly mine. I’ll sometimes humanize a middle chunk, then blend it back into my original voice.
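The skim for numbers, negations, and qualifiers can be partly automated. Below is a rough sketch that counts those signals in the original and the humanized text and reports what disappeared or appeared. The word lists and function names are my own picks, not anything the tool provides, and a word count like this only flags candidates for a manual re-read; it cannot tell you whether the meaning actually changed.

```python
import re
from collections import Counter

# Illustrative word lists; extend them for your own writing.
NEGATIONS = {"not", "never", "no", "none", "cannot", "without"}
QUALIFIERS = {"often", "rarely", "sometimes", "likely", "somewhat",
              "only", "mostly", "usually", "may", "might"}


def tokens(text):
    """Lowercase word tokens; contractions like "don't" stay one token."""
    return re.findall(r"[a-z]+(?:['’][a-z]+)?", text.lower())


def numbers(text):
    """Numeric strings such as 2024, 13%, 7,000 or 3.5."""
    return re.findall(r"\d+(?:,\d{3})*(?:\.\d+)?%?", text)


def signal_counts(text):
    """Count negations, qualifiers, and numbers in a text."""
    counts = Counter()
    for word in tokens(text):
        if word in NEGATIONS or word.endswith(("n't", "n’t")):
            counts["negation:" + word] += 1
        elif word in QUALIFIERS:
            counts["qualifier:" + word] += 1
    for number in numbers(text):
        counts["number:" + number] += 1
    return counts


def compare(original, humanized):
    """Return (lost, added): signals missing from or new in the rewrite."""
    before, after = signal_counts(original), signal_counts(humanized)
    return before - after, after - before


original = "This is not always true. It rarely fails, and only 13% of runs were affected in 2024."
humanized = "This is always true. It fails sometimes, and 15% of runs were affected in 2024."
lost, added = compare(original, humanized)
print("lost:", dict(lost))
print("added:", dict(added))
```

Anything in `lost` or `added` is a sentence worth comparing against the original by eye.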

5. Good use case vs bad use case for you

- Good: smoothing clearly AI-ish drafts, cleaning up repetitive wording, helping with “I know what I mean but this feels stiff” sections.

- Risky: opinion pieces, legal/policy text, research summaries where nuance matters more than “passing” as human.

So: Clever AI Humanizer is fine to use on important articles, but only if you treat its output as a first revision and not something you paste and ship. If you’re already worried about intent and nuance, your instinct is right: keep your original open side by side and treat every change as something you have to approve, not something the tool “must know better” about.

Short version: yes, Clever AI Humanizer can shift meaning, but how bad it gets depends mostly on how you use it, not on the tool itself.

I’ve had a pretty similar experience to what @mikeappsreviewer and @vrijheidsvogel described, but I don’t fully agree with them on “just treat it like a draft editor and you’re fine.” That’s mostly true for generic stuff. On opinionated, carefully worded pieces, it can absolutely sand off the edges of what you’re trying to say.

Where I’ve seen it go wrong:

- It “balances” strong takes. A sharp line like “this policy is harmful and misleading” turned into something like “this policy can be confusing.” That’s not nuance, that’s declawing.

- It loves smoothing ambiguity. If your sentence is intentionally open ended, it sometimes picks a side for you, which quietly shifts intent.

- It occasionally drops those tiny words that matter a lot: “only,” “rarely,” “mostly,” “not necessarily.” One missing “not” and your whole argument flips.

Where I don’t think it’s a big threat:

- Straight factual explanation where tone is secondary

- Background sections of an article

- Transitional paragraphs where you’re not making key claims

What’s actually worked for me to keep it under control, without redoing all the checking steps others already listed:

- Use Clever AI Humanizer mainly on structurally boring parts: intros that just set the scene, definitions, “this is how X works” chunks. Keep your core argument and conclusion mostly in your own words.

- After humanizing, I re-read only the “spicy” sentences: anything that expresses judgment, criticism, or commitment. If those still sound like me, I don’t worry much about the rest.

- Any sentence where you chose the wording very carefully? Don’t send it through at all. Paste around it or reinsert it afterward.
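For the carefully worded sentences, a quick check that they survived word for word is easy to script. This is a sketch with hypothetical names; it does a plain substring match after normalizing case, quotes, and whitespace, so it will flag any rewording at all, which is the point for wording you chose on purpose.

```python
import re


def normalize(text):
    """Lowercase, straighten curly quotes, and collapse whitespace."""
    text = text.replace("’", "'").replace("“", '"').replace("”", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def missing_phrases(protected, humanized):
    """Return the protected phrases that no longer appear verbatim."""
    haystack = normalize(humanized)
    return [phrase for phrase in protected if normalize(phrase) not in haystack]


protected = [
    "this policy is harmful and misleading",
    "in very limited circumstances",
]
humanized = "Honestly, this policy can be confusing. It applies  in very limited\ncircumstances."
print(missing_phrases(protected, humanized))
```

Whatever the function returns is what you paste back in by hand.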

If what you care about is preserving intent more than “passing” detectors, Clever AI Humanizer is still usable, just don’t click once and ship. Think of it as a strong stylistic filter: amazing for cleaning up obvious AI voice, kind of dangerous for the parts of your article where exact phrasing is the whole point.