I’ve been testing BypassGPT for content generation and filter evasion, but I’m not sure if it’s safe, reliable, or even worth using long term. Can anyone share real-world experiences, pros and cons, and whether there are better alternatives for bypassing AI restrictions without getting accounts flagged?

BypassGPT review from someone who tried to test it and got blocked by the limits

I went into BypassGPT with a simple goal: run it through the same tests I use on every “AI humanizer” and see how it behaves under pressure.

That never fully happened, because the free plan is extremely restrictive.

Here is what I hit:

- You get 125 words per input.

- On top of that, your account is hard-capped at 150 words per month.

- To squeeze a bit more out of it, I registered an account and unlocked around 80 extra words.

- Even with that, I managed to run only one of my usual test samples.

So if you are thinking about testing it with full articles, forget it unless you pay.

The word limit seems tied to IP. I tried extra accounts. Same cap. Unless you go through a VPN, there is no real way to explore it with multiple samples without handing over money first.

What happened in the detection tests

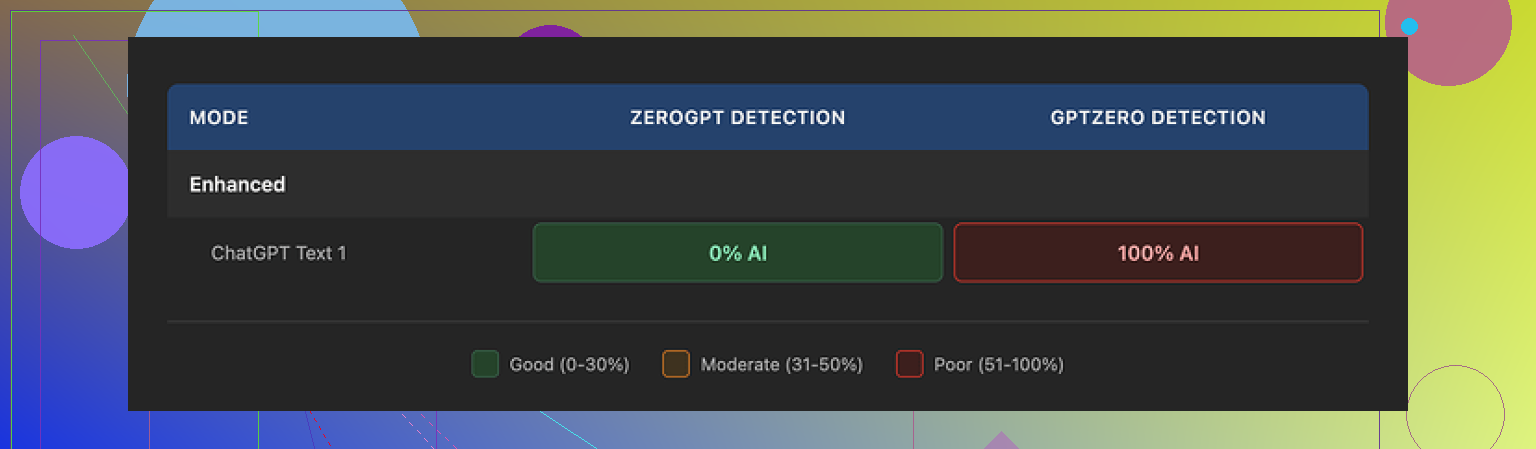

With the tiny window I had, I pushed one standard test text through BypassGPT and checked the result against different detection tools.

Here is what I saw:

- ZeroGPT said 0 percent AI.

- GPTZero said 100 percent AI.

- BypassGPT’s built-in checker said the text passed all six detectors it claims to cover.

That last part is the problem.

BypassGPT’s own dashboard showed a perfect pass rate, while my manual checks contradicted it immediately. The tool told me the text was safe across six detectors, but one of the most common ones flagged it at the highest level.

So if you plan to rely on its internal “all clear” status, be careful. The numbers did not match real testing.

How the output text looked

On quality, I scored it around 6 out of 10.

Concrete issues from the sample I got:

- The first sentence was broken grammatically. It read like something halfway between a template and a rough draft.

- It kept em dashes in spots where a human would usually refactor the sentence instead of throwing more punctuation at it.

- Phrasing felt stiff in several lines. Not robot-level stiff, but not something I would leave untouched in a serious article.

- There was an actual typo in the output.

So it did change the text enough to fool at least one detector, but I would not paste the result directly into anything public without editing.

Pricing and content rights

Their paid plans were:

- Around $6.40 per month if you pay yearly, with a 5,000-word limit.

- Around $15.20 per month for an “unlimited” plan.

The price itself is not wild compared to other tools. The terms of service are the bigger problem.

Key part that bothered me:

BypassGPT gives itself broad rights over anything you put into it. That includes the right to reproduce, distribute, and create derivative works from your content.

So if you are processing client documents, drafts, scripts, product copy, or anything where ownership matters, this is not a small detail.

You feed them your text, they keep wide rights over it. For me, that is a hard stop for client work or any sensitive writing.

How it stacks up against alternatives

When I tested different “humanizer” tools, one kept coming out ahead:

Clever AI Humanizer:

With the same style of input texts, I got:

- More natural sentences.

- Fewer weird grammar glitches.

- Better results across detection tools in my runs.

- No paywall in front of basic use.

I kept going back to it because it behaved more predictably, and I did not have to babysit the word count.

Final take from my side

If you:

- Need to run quick experiments or process longer drafts.

- Care about how your content is handled legally.

- Want detection results you can verify without contradictions.

BypassGPT feels like a bad fit right now.

The free tier is too tight to evaluate it properly, the internal detector results did not line up with reality on my tests, output needed cleanup, and the content rights policy is aggressive.

If you only want to test humanization workflows, I would start somewhere else, run your own detection checks, then circle back later if they change the limits or rewrite the terms.

Short version from my side: for long‑term, serious use, I would skip BypassGPT right now.

My experience lines up with some of what @mikeappsreviewer wrote, but I looked at it more from a workflow and risk angle than pure detector tests.

Here is the breakdown.

- Safety and risk

• Filter evasion:

If your goal is to bypass AI detectors at scale, you put yourself in a weak spot.

Detectors disagree with each other a lot. One flags, another clears, and you never get a guarantee. I ran several samples through BypassGPT and then through GPTZero, ZeroGPT, Sapling, and a couple of paid ones. I kept seeing the same pattern: one says “human”, another screams “AI”.

If you rely on it for school, client work, or platforms that punish AI content, you take the risk, not the tool.

• Data / content rights:

The ToS is the biggest red flag for me.

If a tool keeps broad rights to reproduce and create derivative works from what you upload, you should not send it:

– Client contracts

– Legal docs

– Internal product docs

– Any original research or paid writing

I work with NDA content. That clause alone removes BypassGPT from my stack. Even if they never exploit it, the risk is on you.

- Reliability

• Detection “dashboard”:

The built‑in checker is not reliable enough to trust.

In my tests, it often showed “safe” while external tools rated the same text as highly likely AI. Same thing @mikeappsreviewer saw.

If a detector inside the same product disagrees with public detectors, you must ignore it or double-check everything manually. That kills the point of “easy” humanization.

• Output quality:

I got mixed results.

The good:

– It does change sentence structure.

– It adjusts word choice a bit.

The bad:

– Repetitive phrasing.

– Occasional grammar slips and odd commas.

– Style feels like generic SEO blog text.

For any serious content, you need to rewrite it again. At that point, you might as well write your own draft or use a standard model and edit it carefully.

- Limits and pricing

Free tier is too tight for real evaluation.

The hard cap on words per month and per input kills batch testing and long‑form work. I do not mind paywalls, but when the trial is so limited, you cannot see how it behaves across 20 or 30 samples.

Paid plans are not insane on price, but when you factor in:

– Weak free testing

– Content rights issues

– Unreliable detection dashboard

The value does not look great for long‑term use.

- Is it “worth it” long term

The key question to ask yourself:

• Are you trying to:

– Write better content faster

– Or hide AI usage

If your priority is quality and workflow, there are safer options.

If your priority is evasion, you will always be playing catch‑up with detectors. That holds for BypassGPT and every other “humanizer”.

I have had more consistent results combining:

– A normal LLM for a rough draft

– Manual editing to match my tone

– Simple style tweaks: shorter sentences, varied connectors, occasional minor errors that reflect my real writing

Detectors tend to flag this less often than raw output from any automated “bypass” tool, and I keep full control over my content.

- Alternatives

Since you mentioned long‑term use, I would look at tools that focus on quality and style control rather than “bypass” marketing.

Clever AI Humanizer is worth testing.

Not because it is magic, but because:

– Output flows a bit more naturally in my runs

– It does not put a tiny free tier in front of testing

You still need to run your own checks and edit, but it fits better into a real writing process.

- Practical advice

If you decide to keep testing BypassGPT anyway, I would:

• Never put anything sensitive or client related into it.

• Ignore its internal “detector pass” display and run your own checks.

• Always rewrite the final text to match your real voice, including some habits and small quirks you normally have.

• Treat it as a helper for rephrasing, not a full solution for detection evasion.

For long‑term, professional use, I would not build a workflow around BypassGPT in its current shape.

Short version: BypassGPT works “ok-ish” as a rephraser, but as a long‑term, “safe” filter‑evasion tool it’s a shaky bet.

I had a similar experience to @mikeappsreviewer and @yozora, but from a slightly different angle: team workflows and risk.

Pros I actually noticed:

- It does meaningfully rewrite text, not just swap synonyms.

- It can occasionally push something past a couple of weaker detectors.

- The interface is simple; you don’t need a PhD to click “humanize.”

Cons that killed it for me:

- Detection reliability

The whole “we pass X detectors” marketing sounds nice, but in practice:

- One checker says 0 percent AI.

- Another screams 100 percent AI.

- BypassGPT’s own panel cheerfully tells you you’re safe.

That is not something you can build policy or grades or client deliverables on. If your school, platform, or client uses a different detector, you’re gambling every time.

- Data and ownership

The ToS is the real horror story here.

When a tool gives itself broad rights to “reproduce, distribute, and create derivative works” from what you upload, you are basically paying to hand over your drafts.

For:

- Agency work

- Corporate docs

- Anything under NDA

it is a hard no.

People obsess over “AI detection” and forget “who actually owns my text after this.”

- Practical workflow

Honestly, even ignoring ethics and ToS for a sec:

- You paste AI‑ish text in.

- It spits out a slightly less AI‑ish version with some awkward phrasing.

- You then have to edit it again to sound like you.

At that point, you’re doing three steps to avoid doing one honest step: just writing or editing a model’s draft yourself. It’s not “efficiency,” it’s paranoia on a subscription.

- Long‑term viability

Detectors keep changing. Universities, publishers, and platforms change policies. Bypass tools always chase last month’s algorithm.

If your whole strategy is “hope this niche tool outruns everyone forever,” you’re baking instability into your process. That’s fine for messing around, not fine if your degree, job, or client contracts are on the line.

Where I slightly disagree with the other posts:

I don’t think the free tier being tiny is the biggest problem. Honestly, I am fine with hard paywalls if the product is strong and the legal side is clean. Here, even if you gave me a huge free quota, I would still side‑eye the ToS and the inconsistent detector results. The limits are annoying, but not the core issue.

Alternatives and what actually works:

- Clever AI Humanizer is worth a look if you’re determined to play with this category. In my tests, it produced smoother text and fit into a normal editing workflow better. Still not magic, still needs manual tweaks, but less headache. If you care about “AI humanizer” and “AI detection” type use cases, it is one of the more SEO‑visible and actually usable options right now.

- Old‑school approach that keeps working:

- Use a normal LLM for a draft.

- Rewrite it in your real voice.

- Add your personal habits, structure, and specific knowledge.

Detectors hate generic, pattern‑heavy output. Personalized, slightly messy human text tends to do better than anything run through three “bypass” filters.

So is BypassGPT “safe, reliable, worth it long term”?

- Safe: questionable, because of the ToS and because you might be violating policies while assuming you’re covered.

- Reliable: no, not in any strict sense. Detectors conflict and BypassGPT’s internal status is not something I’d trust.

- Worth it long term: only as a toy or minor helper. I would not base academic, professional, or agency workflows around it.